In a recent project, we were tasked with designing how we would replace a Mainframe system with a cloud native application, building a roadmap and a business case to secure funding for the multi-year modernisation effort required. We were wary of the risks and potential pitfalls of a Big Design Up Front, so we advised our client to work on a ‘just enough, and just in time’ upfront design, with engineering during the first phase. Our client liked our approach and selected us as their partner.

The system was built for a UK-based client’s Data Platform and customer-facing products. This was a very complex and challenging task given the size of the Mainframe, which had been built over 40 years, with a variety of technologies that have significantly changed since they were first released.

Our approach is based on incrementally moving capabilities from the mainframe to the cloud, allowing a gradual legacy displacement rather than a “Big Bang” cutover. In order to do this we needed to identify places in the mainframe design where we could create seams: places where we can insert new behavior with the smallest possible changes to the mainframe’s code. We can then use these seams to create duplicate capabilities on the cloud, dual run them with the mainframe to verify their behavior, and then retire the mainframe capability.

Thoughtworks were involved for the first year of the programme, after which we handed over our work to our client to take it forward. In that timeframe, we did not put our work into production; nevertheless, we trialled multiple approaches that can help you get started more quickly and ease your own Mainframe modernisation journeys. This article provides an overview of the context in which we worked, and outlines the approach we followed for incrementally moving capabilities off the Mainframe.

Contextual Background

The Mainframe hosted a diverse range of services crucial to the client’s business operations. Our programme specifically focused on the data platform designed for insights on Consumers in UK&I (United Kingdom & Ireland). This particular subsystem on the Mainframe comprised approximately 7 million lines of code, developed over a span of 40 years. It provided roughly 50% of the capabilities of the UK&I estate, but accounted for roughly 80% of MIPS (Million instructions per second) from a runtime perspective. The system was significantly complex, and the complexity was further exacerbated by domain responsibilities and concerns spread across multiple layers of the legacy environment.

Several reasons drove the client’s decision to transition away from the Mainframe environment:

- Changes to the system were slow and expensive. The business therefore had challenges keeping pace with the rapidly evolving market, preventing innovation.
- Operational costs associated with running the Mainframe system were high; the client faced a commercial risk with an imminent price increase from a core software vendor.
- Whilst our client had the necessary skill sets for running the Mainframe, it had proven to be hard to find new professionals with expertise in this tech stack, as the pool of skilled engineers in this domain is limited. Furthermore, the job market does not offer as many opportunities for Mainframes, thus people are not incentivised to learn how to develop and operate them.

High-level view of Consumer Subsystem

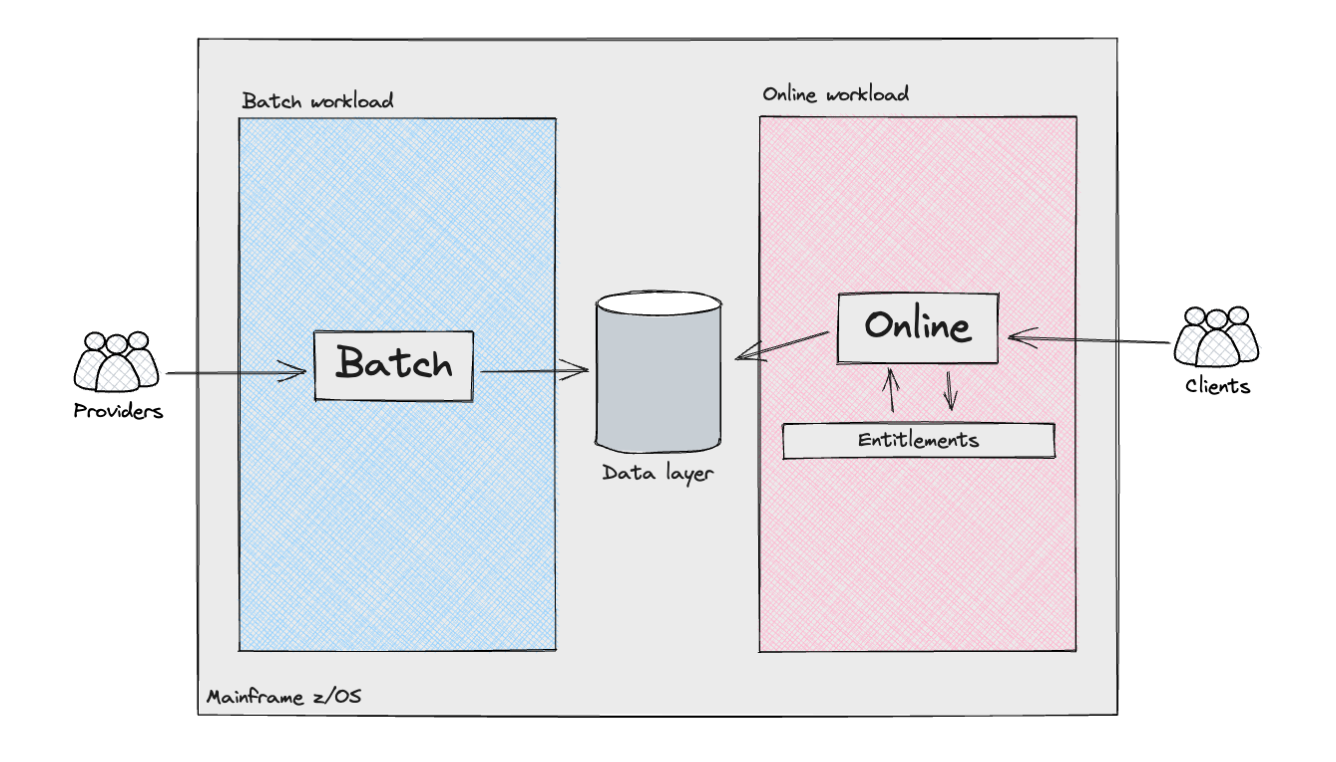

The diagram above shows, from a high-level perspective, the various components and actors in the Consumer subsystem.

The Mainframe supported two distinct types of workloads: batch processing and, for the product API layers, online transactions. The batch workloads resembled what is commonly referred to as a data pipeline. They involved the ingestion of semi-structured data from external providers/sources, or other internal Mainframe systems, followed by data cleansing and modelling to align with the requirements of the Consumer Subsystem. These pipelines incorporated various complexities, including the implementation of the Identity searching logic: in the United Kingdom, unlike the United States with its social security number, there is no universally unique identifier for residents. Consequently, companies operating in the UK&I must employ customised algorithms to accurately determine the individual identities associated with that data.

The online workload also presented significant complexities. The orchestration of API requests was managed by multiple internally developed frameworks, which determined the program execution flow by lookups in datastores, alongside handling conditional branches by analysing the output of the code. We should not overlook the level of customisation this framework applied for each customer. For example, some flows were orchestrated with ad-hoc configuration, catering for implementation details or specific needs of the systems interacting with our client’s online products. These configurations were exceptional at first, but they likely became the norm over time, as our client augmented their online offerings.

This was implemented through an Entitlements engine which operated across layers to ensure that customers accessing products and underlying data were authenticated and authorised to retrieve either raw or aggregated data, which would then be exposed to them through an API response.

Incremental Legacy Displacement: Principles, Benefits, and Considerations

Considering the scope, risks, and complexity of the Consumer Subsystem, we believed the following principles would be tightly linked with us succeeding with the programme:

- Early Risk Reduction: With engineering starting from the beginning, the implementation of a “Fail-Fast” approach would help us identify potential pitfalls and uncertainties early, thus preventing delays from a programme delivery standpoint. The main uncertainties were:
  - Outcome Parity: The client emphasised the importance of upholding outcome parity between the existing legacy system and the new system (it is important to note that this concept differs from Feature Parity). In the client’s Legacy system, various attributes were generated for each consumer, and given the strict industry regulations, maintaining continuity was essential to ensure contractual compliance. We needed to proactively identify discrepancies in data early on, promptly address or explain them, and establish trust and confidence with both our client and their respective customers at an early stage.
  - Cross-functional requirements: The Mainframe is a highly performant machine, and there were uncertainties about whether a solution on the Cloud would satisfy the Cross-functional requirements.
- Deliver Value Early: Collaboration with the client would ensure we could identify a subset of the most critical Business Capabilities we could deliver early, ensuring we could break the system apart into smaller increments. These represented thin-slices of the overall system. Our goal was to build upon these slices iteratively and steadily, helping us accelerate our overall learning in the domain. Furthermore, working through a thin-slice helps reduce the cognitive load required from the team, thus preventing analysis paralysis and ensuring value would be consistently delivered. To achieve this, a platform built around the Mainframe that provides better control over clients’ migration strategies plays a vital role. Using patterns such as Dark Launching and Canary Release would place us in the driver’s seat for a smooth transition to the Cloud. Our goal was to achieve a silent migration process, where customers would seamlessly transition between systems without any noticeable impact. This could only be possible through comprehensive comparison testing and continuous monitoring of outputs from both systems.

With the above principles and requirements in mind, we opted for an Incremental Legacy Displacement approach in conjunction with Dual Run. Effectively, for each slice of the system we were rebuilding on the Cloud, we were planning to feed both the new and as-is system with the same inputs and run them in parallel. This allows us to extract both systems’ outputs and check if they are the same, or at least within an acceptable tolerance. In this context, we defined Incremental Dual Run as: using a Transitional Architecture to support slice-by-slice displacement of capability away from a legacy environment, thereby enabling target and as-is systems to run temporarily in parallel and deliver value.

We decided to adopt this architectural pattern to strike a balance between delivering value, discovering and managing risks early on, ensuring outcome parity, and maintaining a smooth transition for our client throughout the duration of the programme.
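The comparison step of a Dual Run, as described above, can be sketched as follows. This is a minimal illustration and not the tooling used on the programme: the record layout (a mapping from record id to attribute values), the `Mismatch` type, the `compare_outputs` function and the `rel_tol` parameter are all assumptions made for the example.

```python
from dataclasses import dataclass
from math import isclose


@dataclass
class Mismatch:
    """One attribute whose value differs between the two systems (hypothetical type)."""
    record_id: str
    attribute: str
    legacy_value: object
    target_value: object


def _is_number(value):
    # bool is a subclass of int in Python, so exclude it explicitly.
    return isinstance(value, (int, float)) and not isinstance(value, bool)


def compare_outputs(legacy, target, rel_tol=0.0):
    """Compare outputs produced by the as-is and target systems for the same inputs.

    `legacy` and `target` map a record id to a dict of attribute values.
    Numeric values count as equal when within `rel_tol` (relative tolerance);
    all other values must match exactly. Records or attributes present on
    only one side are reported as mismatches.
    """
    mismatches = []
    for record_id in sorted(legacy.keys() | target.keys()):
        legacy_attrs = legacy.get(record_id, {})
        target_attrs = target.get(record_id, {})
        for attribute in sorted(legacy_attrs.keys() | target_attrs.keys()):
            a = legacy_attrs.get(attribute)
            b = target_attrs.get(attribute)
            if _is_number(a) and _is_number(b):
                same = isclose(a, b, rel_tol=rel_tol)
            else:
                same = a == b
            if not same:
                mismatches.append(Mismatch(record_id, attribute, a, b))
    return mismatches


# Illustrative data only: two records with a 1% tolerance on numeric values.
legacy = {"c1": {"score": 100.0, "band": "A"}, "c2": {"score": 50.0, "band": "B"}}
target = {"c1": {"score": 100.4, "band": "A"}, "c2": {"score": 60.0, "band": "B"}}
mismatches = compare_outputs(legacy, target, rel_tol=0.01)
for m in mismatches:
    print(m)
```

With these inputs only the `score` of record `c2` is reported, because the difference for `c1` falls within the tolerance. Reporting each discrepancy individually, rather than a single pass/fail result, fits the stated need to address or explain differences early.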

Incremental Legacy Displacement approach

To accomplish the offloading of capabilities to our target architecture, the team worked closely with Mainframe SMEs (Subject Matter Experts) and our client’s engineers. This collaboration facilitated a just enough understanding of the current as-is landscape, in terms of both technical and business capabilities; it helped us design a Transitional Architecture to connect the existing Mainframe to the Cloud-based system, the latter being developed by other delivery workstreams in the programme.

Our approach began with the decomposition of the Consumer subsystem into specific business and technical domains, including data load, data retrieval & aggregation, and the product layer accessible through external-facing APIs.

Because of our client’s business function, we recognised early that we could exploit a major technical boundary to organise our programme. The client’s workload was largely analytical, processing mostly external data to produce insight which was sold on to their customers. We therefore saw an opportunity to split our transformation programme in two parts, one around data curation, the other around data serving and product use cases, using data interactions as a seam. This was the first high-level seam identified.

Following that, we then needed to further break down the programme into smaller increments.

On the data curation side, we identified that the data sets were managed largely independently of each other; that is, while there were upstream and downstream dependencies, there was no entanglement of the datasets during curation, i.e. ingested data sets had a one to one mapping to their input files.

We then collaborated closely with SMEs to identify the seams within the technical implementation (laid out below) to plan how we could deliver a cloud migration for any given data set, eventually to the level where they could be delivered in any order (Database Writers Processing Pipeline Seam, Coarse Seam: Batch Pipeline Step Handoff as Seam, and Most Granular: Data Attribute Seam). As long as up- and downstream dependencies could exchange data from the new cloud system, these workloads could be modernised independently of each other.

On the serving and product side, we found that any given product used 80% of the capabilities and data sets that our client had created. We needed to find a different approach. After investigation of the way access was sold to customers, we found that we could take a “customer segment” approach to deliver the work incrementally. This entailed finding an initial subset of customers who had purchased a smaller proportion of the capabilities and data, reducing the scope and time needed to deliver the first increment. Subsequent increments would build on top of prior work, enabling further customer segments to be cut over from the as-is to the target architecture. This required using a different set of seams and transitional architecture, which we discuss in Database Readers and Downstream processing as a Seam.
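One way to reason about the “customer segment” approach is sketched below. It is an illustrative assumption rather than the planning method actually used: customers are ordered greedily by how few capabilities they have purchased, and each increment lists only the capabilities not yet available on the target system. The customer names, capability names and both function names are hypothetical.

```python
def plan_increments(entitlements):
    """Order customers so that each increment builds on prior work.

    `entitlements` maps a customer to the set of capabilities they purchased.
    Customers with the fewest purchased capabilities come first (ties broken
    by name). Each plan entry is (customer, capabilities still to migrate).
    """
    migrated = set()
    plan = []
    ordered = sorted(entitlements, key=lambda c: (len(entitlements[c]), c))
    for customer in ordered:
        needed = set(entitlements[customer]) - migrated
        plan.append((customer, sorted(needed)))
        migrated |= needed
    return plan


def customers_ready_for_cutover(entitlements, migrated):
    """Return customers whose purchased capabilities are all already migrated."""
    migrated = set(migrated)
    return sorted(c for c, caps in entitlements.items() if set(caps) <= migrated)


# Hypothetical entitlements for three customers.
entitlements = {
    "acme": {"search", "score", "alerts"},
    "bolt": {"search"},
    "cato": {"search", "score"},
}

for customer, to_build in plan_increments(entitlements):
    print(customer, to_build)

print(customers_ready_for_cutover(entitlements, {"search", "score"}))
```

In this example the first increment only needs `search` to cut over `bolt`, and each later increment adds a single capability. A real plan would also weigh commercial priority and risk, which this sketch ignores.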

Effectively, we ran a thorough analysis of the components that, from a business perspective, functioned as a cohesive whole but were built as distinct elements that could be migrated independently to the Cloud, and laid this out as a programme of sequenced increments.