Apple has unveiled the M4 chip, a powerful new upgrade that will arrive in next-generation iPads (and, further down the line, the best MacBooks and Macs). You can check out our beat-by-beat coverage of the Apple event, but one element of the presentation has left some users confused: what exactly does TOPS mean?

TOPS is an acronym for ‘trillion operations per second’, and is essentially a hardware-specific measure of AI capabilities. More TOPS means faster on-chip AI performance, in this case from the Neural Engine found in the Apple M4 chip.

The M4 chip is capable of 38 TOPS: that's 38,000,000,000,000 operations per second. If that sounds like a staggeringly large number, well, it is! Modern neural processing units (NPUs) like Apple’s Neural Engine are advancing at an incredibly rapid rate; for example, Apple’s own A16 Bionic chip, which debuted in the iPhone 14 Pro less than two years ago, offered 17 TOPS.
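To put those headline numbers in perspective, here is a quick bit of illustrative Python arithmetic. It takes Apple's quoted figures at face value and simply expands "trillion" to 10^12 and compares the two chips.

```python
# Headline figures quoted above, taken at face value
M4_TOPS = 38
A16_TOPS = 17

# "Trillion" means 10^12, so 38 TOPS is 38 x 10^12 operations per second
ops_per_second = M4_TOPS * 10**12
print(f"{ops_per_second:,}")  # 38,000,000,000,000

# How many times more TOPS the M4 quotes compared with the A16 Bionic
print(f"{M4_TOPS / A16_TOPS:.2f}x")  # 2.24x
```

In other words, on paper the M4's Neural Engine offers a little over twice the TOPS of the A16 Bionic.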

Apple’s new chip isn't even the most powerful AI chip about to hit the market: Qualcomm’s upcoming Snapdragon X Elite reportedly offers 45 TOPS, and is expected to land in Windows laptops later this year.

How is TOPS calculated?

The methods by which we measure AI performance are still in their relative infancy, but TOPS provides a useful and user-accessible metric for judging how ‘good’ a given processor is at handling AI tools.

I'm about to get technical, so if you don't care about the math, feel free to skip ahead to the next section! The current industry standard for calculating TOPS is TOPS = 2 × MAC unit count × Frequency / 1 trillion. ‘MAC’ stands for multiply-accumulate; a MAC operation is essentially a pair of calculations (a multiplication and an addition) that each MAC unit on the processor runs once every clock cycle, powering the formulas that make AI models function. Every NPU has a set number of MAC units determined by the NPU’s microarchitecture.

‘Frequency’ here is defined by the clock speed of the processor in question: specifically, how many cycles it can complete per second. It's a common metric also used for CPUs, GPUs, and other components, essentially denoting how ‘fast’ the component is.

So, to calculate how many operations per second an NPU can handle, we simply multiply the MAC unit count by 2 to get the number of operations per clock cycle, then multiply that by the frequency. This gives us an ‘OPS’ figure, which we then divide by a trillion to make it a bit more palatable (and kinder on your zero key when typing it out).
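The calculation above can be sketched as a short Python function. The MAC unit count and clock speed in the example are hypothetical round numbers chosen for illustration; they are not the specifications of the M4 or any other real NPU.

```python
def tops(mac_units: int, frequency_hz: float) -> float:
    """Theoretical peak TOPS for an NPU.

    Each MAC unit performs one multiply and one add (2 operations)
    per clock cycle, so ops/second = 2 * MAC units * clock frequency.
    Dividing by one trillion (10^12) converts OPS into TOPS.
    """
    ops_per_second = 2 * mac_units * frequency_hz
    return ops_per_second / 1e12


# Hypothetical NPU: 16,384 MAC units running at 1.0 GHz (1e9 cycles per second)
print(tops(16_384, 1.0e9))  # 32.768
```

Doubling either the MAC unit count or the clock speed doubles the result, which is why chipmakers can raise TOPS by adding MAC units, raising clocks, or both.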

Simply put, more TOPS means better, faster AI performance.

Why is TOPS important?

TOPS is, in the simplest possible terms, our current best way to judge a device's performance when running local AI workloads. This applies both to the industry and to the wider public; it's a simple number that lets professionals and consumers directly compare the baseline AI performance of different devices.

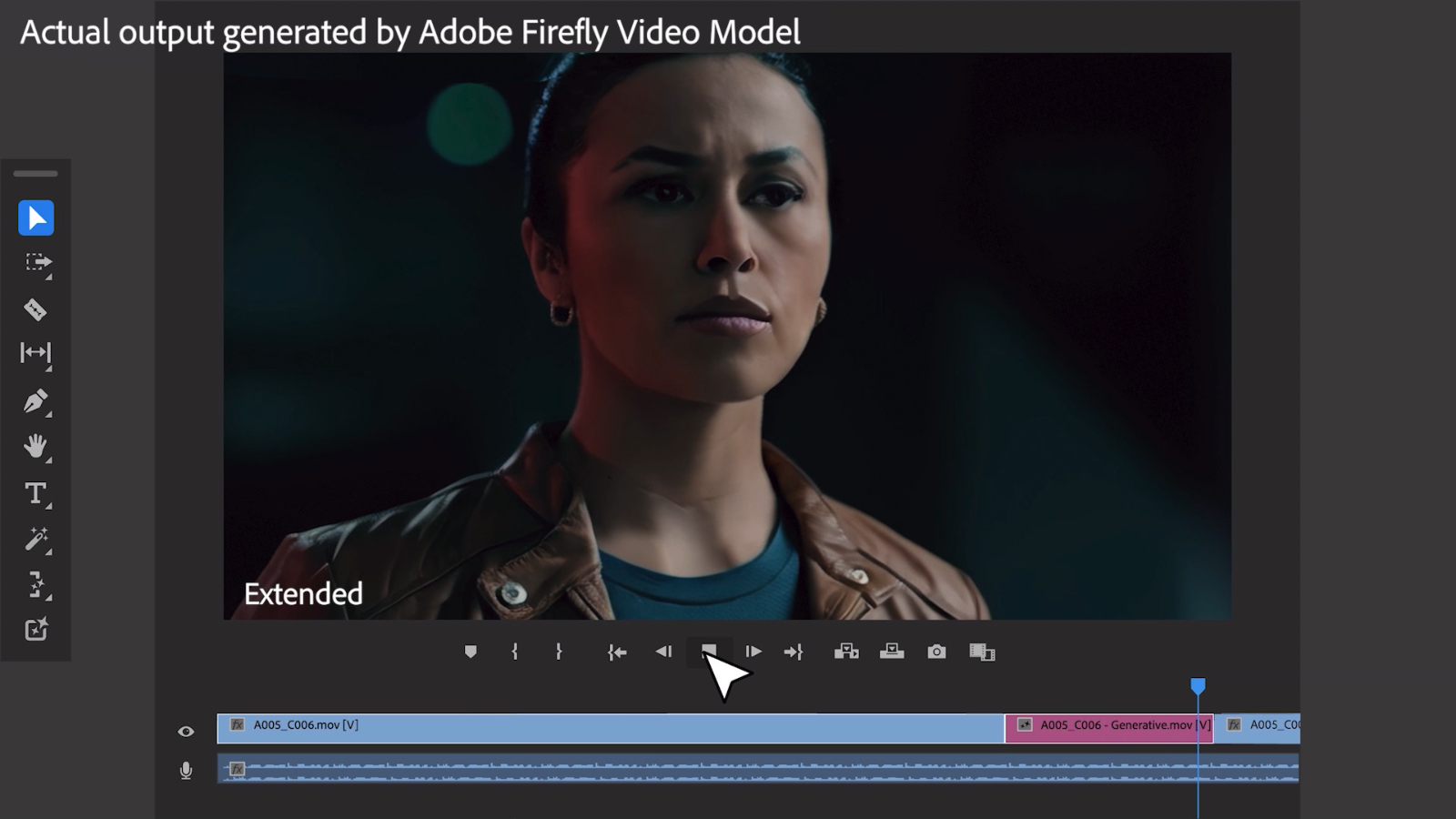

TOPS is only relevant to on-device AI, meaning that cloud-based AI tools (like the internet’s favorite AI bot, ChatGPT) don't typically benefit from a higher TOPS figure on your own device. However, local AI is becoming more and more prevalent, with popular professional software like the Adobe Creative Cloud suite starting to implement more AI-powered features that depend on the capabilities of your machine.

It should be noted that TOPS is by no means a perfect metric. At the end of the day, it's a theoretical figure derived from hardware specifications and can differ greatly from real-world performance. Factors such as power availability, thermal systems, and overclocking can affect the actual speed at which an NPU runs AI workloads.
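Because frequency feeds directly into the TOPS formula, one way to see how thermal and power limits erode the headline figure is to plug in a lower sustained clock. The sketch below assumes a hypothetical NPU whose clock drops from 1.0 GHz to 0.75 GHz under sustained load; the MAC count and both clock speeds are made-up numbers for illustration. Even the lower result is still a theoretical ceiling, since the formula assumes every MAC unit is busy on every cycle.

```python
def tops(mac_units: int, frequency_hz: float) -> float:
    # Theoretical TOPS: 2 operations (multiply + add) per MAC unit per cycle
    return 2 * mac_units * frequency_hz / 1e12


MAC_UNITS = 16_384           # hypothetical MAC unit count
PEAK_CLOCK_HZ = 1.0e9        # hypothetical maximum clock speed
SUSTAINED_CLOCK_HZ = 0.75e9  # hypothetical clock after thermal/power throttling

peak = tops(MAC_UNITS, PEAK_CLOCK_HZ)
sustained = tops(MAC_UNITS, SUSTAINED_CLOCK_HZ)

print(f"Peak: {peak:.3f} TOPS")            # Peak: 32.768 TOPS
print(f"Sustained: {sustained:.3f} TOPS")  # Sustained: 24.576 TOPS
print(f"Drop: {1 - sustained / peak:.0%}")  # Drop: 25%
```

Since the MAC count is fixed by the chip's design, any clock reduction translates one-for-one into a lower effective TOPS figure.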

To that end, though, we're now starting to see AI benchmarks crop up, such as Procyon AI from UL Benchmarks (makers of the popular 3DMark and PCMark benchmarking programs). These can provide a much more realistic idea of how well a device handles AI workloads. You can expect to see TechRadar running AI performance tests as part of our review benchmarking in the near future!