I vividly remember one of my first sightings of a large software project.
I was taking a summer internship at a large English electronics company. My
manager, part of the QA group, gave me a tour of a site and we entered a
huge, depressing, windowless warehouse full of people working in cubicles.
I was told that these programmers had been writing code for this software
for a couple of years, and while they were done programming, their separate
units were now being integrated together, and they had been integrating for
several months. My guide told me that nobody really knew how long it would
take to finish integrating. From this I learned a common story of software
projects: integrating the work of multiple developers is a long and
unpredictable process.

I haven't heard of a team trapped in such a long integration for many years,
but that doesn't mean that integration is a painless process. A developer
may have been working for several days on a new feature, regularly pulling
changes from a common main branch into her feature branch. Just before she's
ready to push her changes, a big change lands on main, one that alters some
code that she's interacting with. She has to switch from finishing off her
feature to figuring out how to integrate her work with this change, which,
while better for her colleague, doesn't work so well for her. Hopefully the
complexities of the change will be in merging the source code, not an
insidious fault that only shows when she runs the application, forcing her
to debug unfamiliar code.

At least in that scenario, she gets to find out before she submits her pull
request. Pull requests can be fraught enough while waiting for someone to
review a change. The review can take time, forcing her to context-switch
from her next feature. A difficult integration during that period can be
very disconcerting, dragging out the review process even longer. And that
may not even be the end of the story, since integration tests are often only
run after the pull request is merged.

In time, this team may learn that making significant changes to core code
causes this kind of problem, and thus stops doing it. But that, by
preventing regular refactoring, ends up allowing cruft to grow throughout
the codebase. Folks who encounter a crufty code base wonder how it got into
such a state, and often the answer lies in an integration process with so
much friction that it discourages people from removing that cruft.

But this needn't be the way. Most projects done by my colleagues at
Thoughtworks, and by many others around the world, treat integration as a
non-event. Any individual developer's work is only a few hours away from a
shared project state and can be integrated back into that state in minutes.
Any integration errors are found rapidly and can be fixed rapidly.

This contrast isn't the result of an expensive and complex tool. The essence
of it lies in the simple practice of everyone on the team integrating
frequently, at least daily, against a controlled source code repository.
This practice is called “Continuous Integration” (or in some circles it's
called “Trunk-Based Development”).

In this article, I explain what Continuous Integration is and how to do it
well. I've written it for two reasons. Firstly, there are always new people
coming into the profession and I want to show them how they can avoid that
depressing warehouse. Secondly, this topic needs clarity because Continuous
Integration is a much misunderstood concept. There are many people who say
that they are doing Continuous Integration, but once they describe their
workflow, it becomes clear that they are missing important pieces. A clear
understanding of Continuous Integration helps us communicate, so we know
what to expect when we describe our way of working. It also helps folks
realize that there are further things they can do to improve their
experience.

I originally wrote this article in 2001, with an update in 2006. Since then
much has changed in the usual expectations of software development teams.
The many-month integration that I saw in the 1980s is a distant memory, and
technologies such as version control and build scripts have become
commonplace. I rewrote this article again in 2023 to better address the
development teams of that time, with twenty years of experience to confirm
the value of Continuous Integration.

Building a Feature with Continuous Integration

The easiest way for me to explain what Continuous Integration is and how it
works is to show a quick example of how it works with the development of a
small feature. I'm currently working with a major manufacturer of magic
potions, and we are extending their product quality system to calculate how
long the potion's effect will last. We already have a dozen potions
supported in the system, and we need to extend the logic for flying potions.
(We've learned that having them wear off too early severely impacts customer
retention.) Flying potions introduce a few new factors to take care of, one
of which is the moon phase during secondary mixing.

I begin by taking a copy of the latest product sources onto my local
development environment. I do this by checking out the current mainline from
the central repository with git pull.

Once the source is in my environment, I execute a command to build the
product. This command checks that my environment is set up correctly, does
any compilation of the sources into an executable product, starts the
product, and runs a comprehensive suite of tests against it. This should
take only a few minutes, while I start poking around the code to decide how
to begin adding the new feature. This build hardly ever fails, but I do it
just in case, because if it does fail, I want to know before I start making
changes. If I make changes on top of a failing build, I'll get confused
thinking it was my changes that caused the failure.

Now I take my working copy and do whatever I need to do to deal with the
moon phases. This will consist of both altering the product code, and also
adding or changing some of the automated tests. During that time I run the
automated build and tests frequently. After an hour or so I have the moon
logic incorporated and tests updated.

I'm now ready to integrate my changes back into the central repository. My
first step for this is to pull again, because it's possible, indeed likely,
that my colleagues will have pushed changes into the mainline while I've
been working. Indeed there are a couple of such changes, which I pull into
my working copy. I combine my changes on top of them and run the build
again. Usually this feels superfluous, but this time a test fails. The test
gives me some clue about what's gone wrong, but I find it more useful to
look at the commits that I pulled to see what changed. It seems that someone
has made an adjustment to a function, moving some of its logic out into its
callers. They fixed all the callers in the mainline code, but I added a new
call in my changes that, of course, they couldn't see yet. I make the same
adjustment and rerun the build, which passes this time.

Since I spent a few minutes sorting that out, I pull again, and again
there's a new commit. However, the build works fine with this one, so I'm
able to git push my change up to the central repository.
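The loop I've just walked through can be written down as a minimal sketch.
Everything named here is an assumption for illustration: pull and push stand
in for git pull and git push, build stands in for the full local build and
test run, and fix represents me repairing a red build by hand.

```python
from typing import Callable


def integrate(pull: Callable[[], None],
              build: Callable[[], bool],
              fix: Callable[[], None],
              push: Callable[[], None],
              max_rounds: int = 10) -> int:
    """Repeat pull-then-build until the build is green, then push.

    All four callables are hypothetical stand-ins supplied by the caller.
    Returns the number of builds that were run.
    """
    for round_number in range(1, max_rounds + 1):
        pull()        # pick up whatever colleagues pushed in the meantime
        if build():   # green against the freshly pulled mainline
            push()
            return round_number
        fix()         # fixing takes time, so loop round and pull again
    raise RuntimeError("build still red after max_rounds attempts")
```

The point of the shape is that a push only ever follows a green build that
was run after the most recent pull.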

However, my push doesn't mean I'm done. Once I've pushed to the mainline, a
Continuous Integration Service notices my commit, checks out the changed
code onto a CI agent, and builds it there. Since the build was fine in my
environment I don't expect it to fail on the CI Service, but there is a
reason that “works on my machine” is a well-known phrase in programmer
circles. It's rare that something gets missed that causes the CI Service's
build to fail, but rare is not the same as never.

The integration machine's build doesn't take long, but it's long enough that
an eager developer would be starting to think about the next step in
calculating flight time. But I'm an old guy, so I enjoy a few minutes to
stretch my legs and read an email. I soon get a notification from the CI
service that all is well, so I start the process again for the next part of
the change.

Practices of Continuous Integration

The story above is an illustration of Continuous Integration that hopefully
gives you a feel of what it's like for an ordinary programmer to work with.
But, as with anything, there are quite a few things to sort out when doing
this in daily work. So now we'll go through the key practices that we need
to do.

Put everything in a version controlled mainline

These days almost every software team keeps their source code in a version
control system, so that every developer can easily find not just the current
state of the product, but all the changes that have been made to the
product. Version control tools allow a system to be rolled back to any point
in its development, which can be very helpful for understanding the history
of the system and for using Diff Debugging to find bugs. As I write this,
the dominant version control system is git.

But while version control is commonplace, some teams fail to take full
advantage of it.

My test for full version control is that I should be able to walk up with a
very minimally configured environment – say a laptop with no more than the
vanilla operating system installed – and be able to easily build and run the
product after cloning the repository. This means the repository should
reliably return product source code, tests, database schema, test data,
configuration files, IDE configurations, install scripts, third-party
libraries, and any tools required to build the software.

You might notice I said that the repository should return all of these
elements, which isn't the same as storing them. We don't have to store the
compiler in the repository, but we need to be able to get at the right
compiler. If I check out last year's product sources, I may need to be able
to build them with the compiler I was using last year, not the version I'm
using now. The repository can do this by storing a link to immutable asset
storage – immutable in the sense that once an asset is stored with an id,
I'll always get exactly that asset back again. I can also do this with
library code, providing I both trust the asset storage and always reference
a particular version, never “the latest version”.
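One way to picture immutable asset storage is a store keyed by content hash.
This is only a sketch under my own assumptions: the AssetStore class, the
pinned_tools mapping, and the byte strings standing in for compiler binaries
are all invented for illustration, and a real team would use whatever
artifact repository they already trust.

```python
import hashlib


class AssetStore:
    """Hypothetical in-memory stand-in for an immutable asset store.

    Ids are SHA-256 content hashes, so an id can only ever name one asset.
    """

    def __init__(self) -> None:
        self._blobs: dict[str, bytes] = {}

    def put(self, content: bytes) -> str:
        asset_id = hashlib.sha256(content).hexdigest()
        self._blobs[asset_id] = content
        return asset_id

    def get(self, asset_id: str) -> bytes:
        content = self._blobs[asset_id]
        # Re-hash on the way out so a corrupted or swapped asset is rejected
        if hashlib.sha256(content).hexdigest() != asset_id:
            raise ValueError(f"asset {asset_id} does not match its id")
        return content


store = AssetStore()
compiler_2022 = store.put(b"compiler binary, 2022 release")
compiler_2023 = store.put(b"compiler binary, 2023 release")

# What the repository keeps under version control: an exact id,
# never a floating reference such as "latest".
pinned_tools = {"compiler": compiler_2022}
```

Checking out last year's sources then also checks out last year's
pinned_tools, which is what lets the old build be reproduced.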

Similar asset storage schemes can be used for anything too large, such as
videos. Cloning a repository often means grabbing everything, even if it's
not needed. By using references to an asset store, the build scripts can
choose to download only what's needed for a particular build.

In general we should store in source control everything we need to build
anything, but nothing that we actually build. Some people do keep the build
products in source control, but I consider that to be a smell – an
indication of a deeper problem, usually an inability to reliably recreate
builds. It can be useful to cache build products, but they should always be
treated as disposable, and it's usually good to then ensure they are removed
promptly so that people don't rely on them when they shouldn't.

A second element of this principle is that it should be easy to find the
code for a given piece of work. Part of this is clear names and URL schemes,
both within the repository and within the broader enterprise. It also means
not having to spend time figuring out which branch within the version
control system to use. Continuous Integration relies on having a clear
mainline – a single, shared branch that acts as the current state of the
product. This is the next version that will be deployed to production.

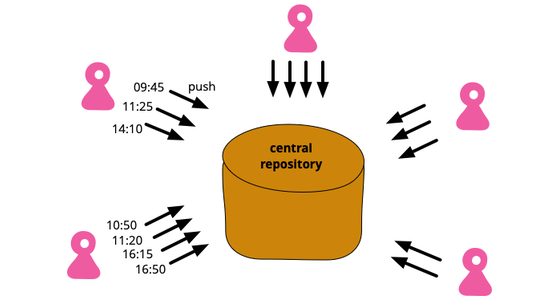

Teams that use git mostly use the name “main” for the mainline branch, but
we also sometimes see “trunk” or the old default of “master”. The mainline
is that branch on the central repository, so to add a commit to a mainline
called main I need to first commit to my local copy of main and then push
that commit to the central server. The tracking branch (called something
like origin/main) is a copy of the mainline on my local machine. However, it
may be out of date, since in a Continuous Integration environment there are
many commits pushed into mainline every day.

As much as possible, we should use text files to define the product and its
environment. I say this because, although version-control systems can store
and track non-text files, they don't usually provide any facility to easily
see the difference between versions. This makes it much harder to understand
what change was made. It's possible that in the future we'll see more
storage formats having the facility to create meaningful diffs, but at the
moment clear diffs are almost entirely reserved for text formats. Even there
we need to use text formats that will produce comprehensible diffs.

Automate the Build

Turning the source code into a running system can often be a complicated
process involving compilation, moving files around, loading schemas into
databases, and so on. However, like most tasks in this part of software
development, it can be automated – and as a result should be automated.
Asking people to type in strange commands or click through dialog boxes is a
waste of time and a breeding ground for mistakes.

Computers are designed to perform simple, repetitive tasks. As soon as you
have humans doing repetitive tasks on behalf of computers, all the computers
get together late at night and laugh at you.

Most modern programming environments include tooling for automating builds,
and such tools have been around for a long time. I first encountered them
with make, one of the earliest Unix tools.

Any instructions for the build need to be stored in the repository, which in
practice means that we must use text representations. That way we can easily
inspect them to see how they work, and crucially, see diffs when they
change. Thus teams using Continuous Integration avoid tools that require
clicking around in UIs to perform a build or to configure an environment.

It's possible to use a regular programming language to automate builds,
indeed simple builds are often captured as shell scripts. But as builds get
more complicated it's better to use a tool that's designed with build
automation in mind. Partly this is because such tools will have built-in
functions for common build tasks. But the main reason is that build tools
work best with a particular way to organize their logic – an alternative
computational model that I refer to as a Dependency Network. A dependency
network organizes its logic into tasks which are structured as a graph of
dependencies.

A trivially simple dependency network might say that the “test” task is
dependent upon the “compile” task. If I invoke the test task, it will look
to see if the compile task needs to be run and if so invoke it first. Should
the compile task itself have dependencies, the network will look to see if
it needs to invoke them first, and so on backwards along the dependency
chain. A dependency network like this is useful for build scripts because
often tasks take a long time, which is wasted if they aren't needed. If
nobody has changed any source files since I last ran the tests, then I can
save doing a potentially long compilation.

To tell if a task needs to be run, the most common and straightforward way
is to look at the modification times of files. If any of the input files to
the compilation have been modified later than the output, then we know the
compilation needs to be executed if that task is invoked.
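A toy version of such a dependency network fits in a few lines. This is a
sketch, not how make or any other build tool is implemented: the Task class,
the file names, and the counter used in place of real file modification
times are all assumptions made so the example stays deterministic.

```python
import itertools
from dataclasses import dataclass, field
from typing import Dict, List

mtimes: Dict[str, int] = {}      # file name -> fake modification time
_clock = itertools.count(1)      # stand-in for the filesystem clock


def touch(path: str) -> None:
    """Record that a file was just written."""
    mtimes[path] = next(_clock)


@dataclass
class Task:
    name: str
    inputs: List[str]
    output: str
    depends_on: List["Task"] = field(default_factory=list)


def is_stale(task: Task) -> bool:
    """Stale if the output is missing or older than any input."""
    if task.output not in mtimes:
        return True
    return any(mtimes[i] > mtimes[task.output] for i in task.inputs)


def invoke(task: Task, ran: List[str]) -> None:
    """Invoke prerequisites first, then run this task only if stale."""
    for dep in task.depends_on:
        invoke(dep, ran)
    if is_stale(task):
        ran.append(task.name)    # a real task would do its work here
        touch(task.output)


compile_task = Task("compile", inputs=["potion.src"], output="potion.bin")
run_tests = Task("test", inputs=["potion.bin"], output="test.report",
                 depends_on=[compile_task])

touch("potion.src")
first: List[str] = []
invoke(run_tests, first)         # nothing built yet: compile, then test
second: List[str] = []
invoke(run_tests, second)        # nothing changed: no work at all
touch("potion.src")              # edit the source
third: List[str] = []
invoke(run_tests, third)         # compile and test run again
```

The second invocation is the payoff: asking for “test” costs nothing when no
input is newer than its output.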

A common mistake is not to include everything in the automated build. The
build should include getting the database schema out of the repository and
firing it up in the execution environment. I'll elaborate my earlier rule of
thumb: anyone should be able to bring in a clean machine, check the sources
out of the repository, issue a single command, and have a running system on
their own environment.

While a simple program may only need a line or two of script file to build,
complex systems often have a large graph of dependencies, finely tuned to
minimize the amount of time required to build things. This website, for
example, has over a thousand web pages. My build system knows that should I
alter the source for this page, I only have to build this one page. But
should I alter a core file in the publication tool chain, then it needs to
rebuild them all. Either way, I invoke the same command in my editor, and
the build system figures out how much to do.

Depending on what we need, we may need different kinds of things to be
built. We can build a system with or without test code, or with different
sets of tests. Some components can be built stand-alone. A build script
should allow us to build alternative targets for different cases.

Make the Build Self-Testing

Traditionally a build meant compiling, linking, and all the additional stuff
required to get a program to execute. A program may run, but that doesn't
mean it does the right thing. Modern statically typed languages can catch
many bugs, but far more slip through that net. This is a critical issue if
we want to integrate as frequently as Continuous Integration demands. If
bugs make their way into the product, then we are faced with the daunting
task of performing bug fixes on a rapidly-changing code base. Manual testing
is too slow to cope with the frequency of change.

Faced with this, we need to ensure that bugs don't get into the product in
the first place. The main technique to do this is a comprehensive test
suite, one that is run before each integration to flush out as many bugs as
possible. Testing isn't perfect, of course, but it can catch a lot of bugs –
enough to be useful. Early computers I used did a visible memory self-test
when they were booting up, which led me to refer to this as Self Testing
Code.

Writing self-testing code affects a programmer's workflow. Any programming
task combines both modifying the functionality of the program, and also
augmenting the test suite to verify this changed behavior. A programmer's
job isn't done merely when the new feature is working, but only once they
also have automated tests to prove it.

Over the two decades since the first version of this article, I've seen
programming environments increasingly embrace the need to provide the tools
for programmers to build such test suites. The biggest push for this was
JUnit, originally written by Kent Beck and Erich Gamma, which had a marked
impact on the Java community in the late 1990s. This inspired similar
testing frameworks for other languages, often referred to as Xunit
frameworks. These stressed light-weight, programmer-friendly mechanics that
allowed a programmer to easily build tests in concert with the product code.
Often these tools have some kind of graphical progress bar that is green if
the tests pass, but turns red should any fail – leading to phrases like
“green build”, or “red-bar”.

The test of such a test suite is that we should be confident that if the
tests are green, then no significant bugs are in the product. I like to
imagine a mischievous imp that is able to make simple modifications to the
product code, such as commenting out lines, or reversing conditionals, but
is not able to change the tests. A sound test suite would never allow the
imp to do any damage without a test turning red. And any test failing is
enough to fail the build: 99.9% green is still red.
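To make the imp concrete, here is a small sketch. The flight_is_safe rule
and its thresholds are invented for this example, and imp_version is simply
the same function with its comparison reversed.

```python
def flight_is_safe(minutes_remaining: float, altitude_m: float) -> bool:
    """Hypothetical product code: safe if grounded or enough potion remains."""
    return altitude_m == 0 or minutes_remaining > 5


def imp_version(minutes_remaining: float, altitude_m: float) -> bool:
    """The imp's edit: the comparison has been reversed."""
    return altitude_m == 0 or minutes_remaining < 5


def suite_is_green(candidate) -> bool:
    """Run every check; a single failure turns the whole suite red."""
    checks = [
        candidate(10, 100) is True,   # plenty of potion left while flying
        candidate(1, 100) is False,   # nearly worn off while flying
        candidate(1, 0) is True,      # on the ground, always safe
    ]
    return all(checks)


original_green = suite_is_green(flight_is_safe)   # the real code passes
imp_green = suite_is_green(imp_version)           # the imp is caught
```

If I deleted the first two checks, the imp's version would pass, which is
exactly the kind of gap this thought experiment is meant to expose.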

Self-testing code is so important to Continuous Integration that it is a
necessary prerequisite. Often the biggest barrier to implementing Continuous
Integration is insufficient skill at testing.

That self-testing code and Continuous Integration are so tied together is no
surprise. Continuous Integration was originally developed as part of Extreme
Programming and testing has always been a core practice of Extreme
Programming. This testing is often done in the form of Test Driven
Development (TDD), a practice that instructs us to never write new code
unless it fixes a test that we've written just before. TDD isn't essential
for Continuous Integration, as tests can be written after production code as
long as they are done before integration. But I do find that, most of the
time, TDD is the best way to write self-testing code.

The tests act as an automated check of the health of the code base, and
while tests are the key element of such an automated verification of the
code, many programming environments provide additional verification tools.
Linters can detect poor programming practices and ensure code follows a
team's preferred formatting style, and vulnerability scanners can find
security weaknesses. Teams should evaluate these tools to include them in
the verification process.

Of course we can't count on tests to find everything. As it's often been
said: tests don't prove the absence of bugs. However, perfection isn't the
only point at which we get payback for a self-testing build. Imperfect
tests, run frequently, are much better than perfect tests that are never
written at all.

Everyone Pushes Commits To the Mainline Every Day

No code sits unintegrated for more than a couple of hours.

Integration is primarily about communication. Integration allows developers
to tell other developers about the changes they have made. Frequent
communication allows people to know quickly as changes develop.

The one prerequisite for a developer committing to the mainline is that they
can correctly build their code. This, of course, includes passing the build
tests. As with any commit cycle the developer first updates their working
copy to match the mainline, resolves any conflicts with the mainline, then
builds on their local machine. If the build passes, then they are free to
push to the mainline.

If everyone pushes to the mainline frequently, developers quickly find out
if there's a conflict between two developers. The key to fixing problems
quickly is finding them quickly. With developers committing every few hours
a conflict can be detected within a few hours of it occurring; at that point
not much has happened and it's easy to resolve. Conflicts that stay
undetected for weeks can be very hard to resolve.

Conflicts in the codebase come in different forms. The easiest to find and
resolve are textual conflicts, often called “merge conflicts”, when two
developers edit the same fragment of code in different ways. Version-control
tools detect these easily once the second developer pulls the updated
mainline into their working copy. The harder problem is Semantic Conflicts.
If my colleague changes the name of a function and I call that function in
my newly added code, the version-control system can't help us. In a
statically typed language we get a compilation failure, which is pretty easy
to detect, but in a dynamic language we get no such help. And even
statically-typed compilation doesn't help us when a colleague makes a change
to the body of a function that I call, making a subtle change to what it
does. This is why it's so important to have self-testing code.
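Here is a sketch of the body-change kind of semantic conflict. The functions
and numbers are hypothetical: duration_before and duration_after represent
the same mainline function before and after a colleague's push, given
different names here only so both can sit in one file.

```python
def duration_before(strength: int) -> float:
    """Mainline when I branched: returns minutes."""
    return strength * 30.0


def duration_after(strength: int) -> float:
    """Mainline after a colleague's push: same signature, now seconds."""
    return strength * 30.0 * 60


def flight_time_minutes(strength: int, duration=duration_before) -> float:
    """My new code, written against the old behavior (assumes minutes)."""
    full_moon_factor = 1.5
    return duration(strength) * full_moon_factor


def flight_time_check(duration) -> bool:
    """My check: a strength-2 potion should fly 90 minutes at full moon."""
    return flight_time_minutes(2, duration) == 90.0


green_before_pull = flight_time_check(duration_before)
green_after_pull = flight_time_check(duration_after)
```

Nothing here would show up as a merge conflict or a type error; only the
check going red after the pull reveals that the two changes disagree.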

A test failure alerts us that there's a conflict between changes, but we
still have to figure out what the conflict is and how to resolve it. Since
there's only a few hours of changes between commits, there's only so many
places where the problem could be hiding. Furthermore, since not much has
changed we can use Diff Debugging to help us find the bug.
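The search behind Diff Debugging can be sketched as a binary search over the
commit history, which is the idea that git bisect automates. The function
below is my own illustration: commits is an ordered list of hypothetical
commit ids and is_green is an assumed callback that builds and tests one of
them.

```python
from typing import Callable, Sequence


def first_bad_commit(commits: Sequence[str],
                     is_green: Callable[[str], bool]) -> str:
    """Binary-search an ordered commit list for the first red build.

    Assumes commits[0] is known green, commits[-1] is known red, and that
    once the build goes red it stays red for all later commits.
    """
    good, bad = 0, len(commits) - 1
    while bad - good > 1:
        mid = (good + bad) // 2
        if is_green(commits[mid]):
            good = mid      # the fault was introduced after mid
        else:
            bad = mid       # the fault was introduced at or before mid
    return commits[bad]


history = ["a", "b", "c", "d", "e", "f", "g", "h"]
culprit = first_bad_commit(history, lambda commit: commit < "f")
```

With frequent integration the list is short and each commit is small, so
both the search and reading the offending diff are quick.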

My general rule of thumb is that every developer should commit to the
mainline every day. In practice, those experienced with Continuous
Integration integrate more frequently than that. The more frequently we
integrate, the fewer places we have to look for conflict errors, and the
more rapidly we fix conflicts.

Frequent commits encourage developers to break down their work into small
chunks of a few hours each. This helps track progress and provides a sense
of progress. Often people initially feel they can't do something meaningful
in just a few hours, but we've found that mentoring and practice help us
learn.

Every Push to Mainline Should Trigger a Build

If everyone on the team integrates at least daily, this ought to mean that
the mainline stays in a healthy state. In practice, however, things still do
go wrong. This may be due to lapses in discipline, such as neglecting to
update and build before a push. There may also be environmental differences
between developer workspaces.

We thus need to ensure that every commit is verified in a reference
environment. The usual way to do this is with a Continuous Integration
Service (CI Service) that monitors the mainline. (Examples of CI Services
are tools like Jenkins, GitHub Actions, Circle CI etc.) Every time the
mainline receives a commit, the CI service checks out the head of the
mainline into an integration environment and performs a full build. Only
once this integration build is green can the developer consider the
integration to be complete. By ensuring we have a build with every push,
should we get a failure, we know that the fault lies in that latest push,
narrowing down where we have to look to fix it.

I want to stress here that when we use a CI Service, we only use it on the
mainline, which is the main branch on the reference instance of the version
control system. It's common to use a CI service to monitor and build from
multiple branches, but the whole point of integration is to have all commits
coexisting on a single branch. While it may be useful to use a CI service to
do an automated build for different branches, that's not the same as
Continuous Integration, and teams using Continuous Integration will only
need the CI service to monitor a single branch of the product.

While almost all teams use CI Services these days, it is perfectly possible
to do Continuous Integration without one. Team members can manually check
out the head on the mainline onto an integration machine and perform a build
to verify the integration. But there's little point in a manual process when
automation is so freely available.

(This is an appropriate point to mention that my colleagues at Thoughtworks
have contributed a lot of open-source tooling for Continuous Integration, in
particular Cruise Control – the first CI Service.)

Fix Broken Builds Immediately

Continuous Integration can only work if the mainline is kept in a healthy
state. Should the integration build fail, then it needs to be fixed right
away. As Kent Beck puts it: “nobody has a higher priority task than fixing
the build”. This doesn't mean that everyone on the team has to stop what
they are doing in order to fix the build; usually it only needs a couple of
people to get things working again. It does mean a conscious prioritization
of a build fix as an urgent, high priority task.

Usually the best way to fix the build is to revert the latest commit from
the mainline, taking the system back to the last-known good build. If the
cause of the problem is immediately obvious then it can be fixed directly
with a new commit, but otherwise reverting the mainline allows some folks to
figure out the problem in a separate development environment, allowing the
rest of the team to continue to work with the mainline.

Some teams prefer to remove all risk of breaking the mainline by

using a Pending Head (also called Pre-tested, Delayed,

or Gated Commit). To do this the CI service needs to set things up so that

commits pushed to the mainline for integration do not immediately go

onto the mainline. Instead they are placed on another branch until the

build completes and only migrated to the mainline after a green build.

While this technique avoids any danger of the mainline breaking, an

effective team should rarely see a red mainline, and on the few occasions it

happens its very visibility encourages folks to learn how to avoid

it.
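
A pending head can be pictured with a toy model. This is only a sketch under stated assumptions: `GatedMainline` and `toy_build` are hypothetical names, and a real CI service would run an actual build on a separate branch rather than call a Python function.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class GatedMainline:
    """Toy pending head: a commit reaches mainline only after a green build."""
    build: Callable[[List[str]], bool]  # hypothetical build step, True means green
    mainline: List[str] = field(default_factory=list)
    rejected: List[str] = field(default_factory=list)

    def submit(self, commit: str) -> bool:
        # The candidate is built on a pending branch: mainline plus the new commit.
        pending = self.mainline + [commit]
        if self.build(pending):
            self.mainline = pending       # green: migrate to mainline
            return True
        self.rejected.append(commit)      # red: mainline is left untouched
        return False


def toy_build(commits: List[str]) -> bool:
    # Stand-in for a real build: any commit labelled "broken" fails it.
    return not any("broken" in c for c in commits)


gate = GatedMainline(build=toy_build)
gate.submit("add login form")
gate.submit("broken refactor")
gate.submit("fix typo")
print(gate.mainline)   # ['add login form', 'fix typo']
print(gate.rejected)   # ['broken refactor']
```

The failing commit never lands, so everyone else keeps working from a green mainline.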

Keep the Build Fast

The whole point of Continuous Integration is to provide rapid

feedback. Nothing sucks the blood of Continuous Integration

more than a build that takes a long time. Here I must admit a certain

crotchety old guy amusement at what’s considered to be a long build.

Most of my colleagues consider a build that takes an hour to be totally

unreasonable. I remember teams dreaming that they could get it so fast,

and occasionally we still run into cases where it’s very hard to get

builds to that speed.

For most projects, however, the XP guideline of a ten

minute build is perfectly within reason. Most of our modern

projects achieve this. It’s worth putting in concentrated

effort to make it happen, because every minute chiseled off

the build time is a minute saved for each developer every time

they commit. Since Continuous Integration demands frequent commits, this adds up

to a lot of time.

If we’re staring at a one hour build time, then getting to

a faster build may seem like a daunting prospect. It can even

be daunting to work on a new project and think about how to

keep things fast. For enterprise applications, at least, we’ve

found the usual bottleneck is testing, particularly tests

that involve external services such as a database.

Probably the most crucial step is to start working

on setting up a Deployment Pipeline. The idea behind a

deployment pipeline (also known as build

pipeline or staged build) is that there are in fact

multiple builds done in sequence. The commit to the mainline triggers

the first build, what I call the commit build. The commit

build is the build that’s needed when someone pushes commits to the

mainline. The commit build is the one that has to be done quickly; as a

result it will take a number of shortcuts that will reduce the ability

to detect bugs. The trick is to balance the needs of bug finding and

speed so that a good commit build is stable enough for other people to

work on.

Once the commit build is good then other people can work on

the code with confidence. However there are further, slower,

tests that we can start to do. Additional machines can run

further testing routines on the build that take longer to

do.

A simple example of this is a two stage deployment pipeline. The

first stage would do the compilation and run tests that are more

localized unit tests with slow services replaced by Test Doubles, such as a fake in-memory database or

a stub for an external service. Such

tests can run very fast, keeping within the ten minute guideline.

However any bugs that involve larger scale interactions, particularly

those involving the real database, won’t be found. The second stage

build runs a different suite of tests that do hit a real database and

involve more end-to-end behavior. This suite might take a couple of

hours to run.
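
To make the first stage concrete, here is a minimal sketch of a commit-stage test that swaps a database for a fake in-memory store. The names `InMemoryOrderStore` and `apply_discount` are invented for illustration; the only assumption is that the code under test reaches storage through a small save/load interface.

```python
class InMemoryOrderStore:
    """Fake standing in for a database-backed store (hypothetical interface)."""

    def __init__(self):
        self._rows = {}

    def save(self, order_id, total):
        self._rows[order_id] = total

    def load(self, order_id):
        return self._rows[order_id]


def apply_discount(store, order_id, percent):
    """Code under test: it depends only on the store's save/load interface."""
    total = store.load(order_id)
    discounted = round(total * (100 - percent) / 100, 2)
    store.save(order_id, discounted)
    return discounted


def test_apply_discount_with_fake_store():
    # No real database is started, so this runs in well under a second.
    store = InMemoryOrderStore()
    store.save("o-1", 200.0)
    assert apply_discount(store, "o-1", 10) == 180.0
    assert store.load("o-1") == 180.0


test_apply_discount_with_fake_store()
```

A second-stage test would exercise the same `apply_discount` against the real database, catching problems the fake cannot show.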

In this scenario people use the first stage as the commit build and

use this as their main CI cycle.

If the secondary build fails, then this may not have

the same ‘stop everything’ quality, but the team does aim to fix such

bugs as rapidly as possible, while keeping the commit build running.

Since the secondary build may be much slower, it may not run after every

commit. In that case it runs as often as it can, picking the last good

build from the commit stage.

If the secondary build detects a bug, that’s a sign that the commit

build could do with another test. As much as possible we want to ensure

that any later-stage failure leads to new tests in the commit build that

would have caught the bug, so the bug stays fixed in the commit build.

This way the commit tests are strengthened whenever something gets past

them. There are cases where there’s no way to build a fast-running test

that exposes the bug, so we may decide to only test for that condition

in the secondary build. Most of the time, fortunately, we can add suitable

tests to the commit build.

Another way to speed things up is to use parallelism and multiple

machines. Cloud environments, in particular, allow teams to easily spin

up a small fleet of servers for builds. Providing the tests can run

reasonably independently, which well-written tests can, then using such

a fleet can get very rapid build times. Such parallel cloud builds may

also be worthwhile for a developer’s pre-integration build.
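
One way to split independent tests across a fleet is a greedy "longest test first" assignment. The sketch below is an illustration only: the test names and durations are made up, and real CI services have their own sharding mechanisms.

```python
import heapq


def shard_tests(durations, workers):
    """Assign tests to workers, longest first, always onto the least-loaded worker."""
    heap = [(0.0, i) for i in range(workers)]  # (total seconds so far, worker index)
    heapq.heapify(heap)
    shards = [[] for _ in range(workers)]
    for name, seconds in sorted(durations.items(), key=lambda kv: (-kv[1], kv[0])):
        load, i = heapq.heappop(heap)
        shards[i].append(name)
        heapq.heappush(heap, (load + seconds, i))
    loads = [sum(durations[n] for n in shard) for shard in shards]
    return shards, loads


# Hypothetical test suites with their run times in seconds.
durations = {"test_api": 300, "test_db": 240, "test_ui": 180,
             "test_auth": 120, "test_utils": 60}
shards, loads = shard_tests(durations, workers=3)
print(shards)  # [['test_api'], ['test_db', 'test_utils'], ['test_ui', 'test_auth']]
print(loads)   # [300, 300, 300]
```

Run serially these suites take 900 seconds; spread over three machines the slowest shard takes 300, which is what determines the build time.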

While we’re considering the broader build process, it’s worth

mentioning another category of automation: interaction with

dependencies. Most software uses a large range of dependent software

produced by different organizations. Changes in these dependencies can

cause breakages in the product. A team should thus automatically check

for new versions of dependencies and integrate them into the build,

essentially as if they were another team member. This should be done

frequently, usually at least daily, depending on the rate of change of

the dependencies. A similar approach should be used with running

Contract Tests. If these dependency

interactions go red, they don’t have the same “stop the line” effect as

regular build failures, but do require prompt action by the team to

investigate and fix.

Hide Work-in-Progress

Continuous Integration means integrating as soon as there is a little

forward progress and the build is healthy. Frequently this suggests

integrating before a user-visible feature is fully formed and ready for

release. We thus need to consider how to deal with latent code: code

that’s part of an unfinished feature that’s present in a live

release.

Some people worry about latent code, because it’s putting

non-production quality code into the released executable. Teams doing

Continuous Integration ensure that all code sent to the mainline is

production quality, together with the tests that

verify the code. Latent code may never be executed in

production, but that doesn’t stop it from being exercised in tests.

We can prevent the code being executed in production by using a

Keystone Interface: ensuring the interface that

provides a path to the new feature is the last thing we add to the code

base. Tests can still check the code at all levels other than that final

interface. In a well-designed system, such interface elements should be

minimal and thus simple to add with a short programming episode.

Using Dark Launching we can test some changes in

production before we make them visible to the user. This technique is

useful for assessing the impact on performance.

Keystones cover most cases of latent code, but for occasions where

that isn’t possible we use Feature Flags.

Feature flags are checked whenever we’re about to execute latent code;

they’re set as part of the environment, perhaps in an

environment-specific configuration file. That way the latent code can be

active for testing, but disabled in production. As well as enabling

Continuous Integration, feature flags also make it easier to do runtime

switching for A/B testing and Canary Releases. We then make sure we remove this logic promptly once a

feature is fully released, so that the flags don’t clutter the code

base.
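
A feature flag check can be very small. The sketch below assumes the environment-specific configuration is a JSON document with a `feature_flags` section; that format, the flag name `loyalty_discount`, and the function names are all invented for illustration.

```python
import json


def load_flags(config_text):
    """Parse an environment-specific config (JSON here, purely as an assumption)."""
    return json.loads(config_text).get("feature_flags", {})


def is_enabled(flags, name):
    # Unknown flags default to off, so latent code stays dark unless switched on.
    return bool(flags.get(name, False))


def checkout_total(prices, flags):
    total = sum(prices)
    if is_enabled(flags, "loyalty_discount"):  # guard around the latent code path
        total = round(total * 0.95, 2)
    return total


test_env = load_flags('{"feature_flags": {"loyalty_discount": true}}')
prod_env = load_flags('{"feature_flags": {}}')

print(checkout_total([40.0, 60.0], test_env))  # 95.0
print(checkout_total([40.0, 60.0], prod_env))  # 100.0
```

Once the feature is fully released, the `if` and the flag entry are deleted, leaving only the new behavior.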

Branch By Abstraction is another technique for

managing latent code, which is particularly useful for large

infrastructural changes within a code base. Essentially this creates an

internal interface to the modules that are being changed. The interface

can then route between old and new logic, gradually replacing execution

paths over time. We’ve seen this done to change such pervasive elements

as the persistence platform.
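
As a rough sketch of the routing idea, assume a persistence change that is migrated one kind of record at a time. `DictStore` and `RoutingStore` are hypothetical names, and the dictionaries stand in for real storage platforms.

```python
class DictStore:
    """Stand-in for a persistence platform; a real one would talk to a database."""

    def __init__(self):
        self.rows = {}

    def save(self, kind, key, value):
        self.rows[(kind, key)] = value

    def load(self, kind, key):
        return self.rows[(kind, key)]


class RoutingStore:
    """The internal interface: callers use this, unaware of which platform answers."""

    def __init__(self, old, new, migrated_kinds):
        self.old, self.new = old, new
        self.migrated_kinds = set(migrated_kinds)

    def _target(self, kind):
        # Execution paths move to the new platform one record kind at a time.
        return self.new if kind in self.migrated_kinds else self.old

    def save(self, kind, key, value):
        self._target(kind).save(kind, key, value)

    def load(self, kind, key):
        return self._target(kind).load(kind, key)


old_platform, new_platform = DictStore(), DictStore()
store = RoutingStore(old_platform, new_platform, migrated_kinds={"invoice"})
store.save("invoice", "i-1", "paid")   # already routed to the new platform
store.save("customer", "c-7", "Ada")   # still served by the old platform
print(store.load("invoice", "i-1"), store.load("customer", "c-7"))  # paid Ada
```

When every kind has been migrated, the old platform and the routing logic can be removed, leaving the abstraction pointing only at the new implementation.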

When introducing a new feature, we should always ensure that we can

roll back in case of problems. Parallel Change (aka

expand-contract) breaks a change into reversible steps. For example, if

we rename a database field, we first create a new field with the new

name, then write to both old and new fields, then copy data from the

existing old fields, then read from the new field, and only then remove

the old field. We can reverse any of these steps, which would not be

possible if we made such a change all at once. Teams using Continuous

Integration often look to break up changes in this way, keeping changes

small and easy to undo.
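
The field rename described above can be walked through with an in-memory SQLite database. This is a sketch under assumptions: the table `customer` and the columns `surname` (old) and `last_name` (new) are invented, and in practice each step would ship as its own small commit and deployment.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customer (id INTEGER PRIMARY KEY, surname TEXT)")
conn.execute("INSERT INTO customer (id, surname) VALUES (1, 'Lovelace')")

# Step 1 (expand): create the new field alongside the old one.
conn.execute("ALTER TABLE customer ADD COLUMN last_name TEXT")


# Step 2: new writes go to both the old and the new field.
def insert_customer(conn, customer_id, name):
    conn.execute(
        "INSERT INTO customer (id, surname, last_name) VALUES (?, ?, ?)",
        (customer_id, name, name),
    )


insert_customer(conn, 2, "Hopper")

# Step 3: copy data from the existing old field into the new one.
conn.execute("UPDATE customer SET last_name = surname WHERE last_name IS NULL")


# Step 4: readers switch to the new field.
def last_name(conn, customer_id):
    row = conn.execute(
        "SELECT last_name FROM customer WHERE id = ?", (customer_id,)
    ).fetchone()
    return row[0]


print(last_name(conn, 1), last_name(conn, 2))  # Lovelace Hopper

# Step 5 (contract): removing the old column is left out of this sketch;
# it would happen only once nothing reads or writes it any more.
```

At every point between steps the application works against the schema as it stands, which is what keeps each step small and easy to undo.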

Test in a Clone of the Production Environment

The point of testing is to flush out, under controlled

conditions, any problem that the system will have in

production. A significant part of this is the environment

within which the production system will run. If we test in a

different environment, every difference results in a risk that

what happens under test won’t happen in production.

Consequently, we want to set up our test environment to be

as exact a mimic of our production environment as

possible. Use the same database software, with the same

versions, use the same version of the operating system. Put all

the appropriate libraries that are in the production

environment into the test environment, even if the system

doesn’t actually use them. Use the same IP addresses and

ports, run it on the same hardware.

Virtual environments make it much easier than it was in the past to

do this. We run production software in containers, and reliably build

exactly the same containers for testing, even in a developer’s

workspace. It’s worth the effort and cost to do this; the price is

usually small compared to hunting down a single bug that crawled out of

the hole created by environment mismatches.

Some software is designed to run in multiple environments, such as

different operating systems and platform versions. The deployment

pipeline should arrange for testing in all of these environments in

parallel.

A point to take care of is when the production environment isn’t as

good as the development environment. Will the production software be

running on machines connected with dodgy wifi, like smartphones? Then ensure a test

environment mimics poor network connections.

Everyone can see what’s happening

Continuous Integration is all about communication, so we

want to ensure that everyone can easily see the state of the

system and the changes that have been made to it.

One of the most important things to communicate is the

state of the mainline build. CI Services have dashboards that allow

everyone to see the state of any builds they are running. Often they

link with other tools to broadcast build information to internal social

media tools such as Slack. IDEs often have hooks into these mechanisms,

so developers can be alerted while still inside the tool they are using

for much of their work. Many teams only send out notifications for build

failures, but I think it’s worth sending out messages on success too.

That way people get used to the regular signals and get a sense for the

length of the build. Not to mention the fact that it’s nice to get a

“well done” every day, even if it’s only from a CI server.

Teams that share a physical space often have some kind of always-on

physical display for the build. Usually this takes the form of a large

screen showing a simplified dashboard. This is particularly valuable to

alert everyone to a broken build, often using the red/green colors on

the mainline commit build.

One of the older physical displays I rather liked was the use of red

and green lava lamps. One of the features of a lava lamp is that after

they are turned on for a while they start to bubble. The idea was that

if the red lamp came on, the team should fix the build before it starts

to bubble. Physical displays for build status often got playful, adding

some quirky personality to a team’s workspace. I have fond memories of a

dancing rabbit.

As well as the current state of the build, these displays can show

useful information about recent history, which can be an indicator of

project health. Back at the turn of the century I worked with a team who

had a history of being unable to create stable builds. We put a calendar

on the wall that showed a full year with a small square for each day.

Every day the QA group would put a green sticker on the day if they had

received one stable build that passed the commit tests, otherwise a red

square. Over time the calendar revealed the state of the build process,

showing a steady improvement until green squares were so common that the

calendar disappeared, its purpose fulfilled.

Automate Deployment

To do Continuous Integration we need multiple environments, one to

run commit tests, probably more to run further parts of the deployment

pipeline. Since we are moving executables between these environments

multiple times a day, we’ll want to do this automatically. So it’s

important to have scripts that will allow us to deploy the application

into any environment easily.

With modern tools for virtualization, containerization, and serverless we can go

further. Not just have scripts to deploy the product, but also scripts

to build the required environment from scratch. This way we can start

with a bare-bones environment that’s available off-the-shelf, create the

environment we need for the product to run, install the product, and run

it, all entirely automatically. If we’re using feature flags to hide

work-in-progress, then these environments can be set up with all the

feature-flags on, so these features can be tested with all immanent interactions.

A natural consequence of this is that these same scripts allow us to

deploy into production with similar ease. Many teams deploy new code

into production multiple times a day using these automations, but even

if we choose a less frequent cadence, automatic deployment helps speed

up the process and reduces errors. It’s also a cheap option since it

just uses the same capabilities that we use to deploy into test

environments.

If we deploy into production automatically, one extra capability we find

handy is automated rollback. Bad things do happen from time to time, and

if smelly brown substances hit rotating metal, it’s good to be able to

quickly go back to the last known good state. Being able to

automatically revert also reduces a lot of the tension of deployment,

encouraging people to deploy more frequently and thus get new features

out to users quickly. Blue Green Deployment allows us

to both make new versions live quickly, and to roll back equally quickly

if needed, by shifting traffic between deployed versions.
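
The traffic shift at the heart of a blue-green setup can be sketched as a pointer flip. The class below is a toy with hypothetical names and version numbers; in a real system the switch would live in a load balancer or router rather than in application code.

```python
class BlueGreenRouter:
    """Toy traffic switch between two deployed versions (names are hypothetical)."""

    def __init__(self, blue_version, green_version):
        self.versions = {"blue": blue_version, "green": green_version}
        self.live = "blue"

    def idle(self):
        return "green" if self.live == "blue" else "blue"

    def deploy_to_idle(self, version):
        # New code goes to the environment that is not serving traffic.
        self.versions[self.idle()] = version

    def switch(self):
        # Going live and rolling back are the same operation: flip the pointer.
        self.live = self.idle()

    def serving(self):
        return self.versions[self.live]


router = BlueGreenRouter(blue_version="1.4.0", green_version="1.3.9")
router.deploy_to_idle("1.5.0")
router.switch()
print(router.serving())  # 1.5.0
router.switch()          # rollback: the previous version is still deployed
print(router.serving())  # 1.4.0
```

Because the previous version stays deployed on the idle side, rolling back is as quick as going live.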

Automated Deployment makes it easier to set up Canary Releases, deploying a new version of a

product to a subset of our users in order to flush out problems before

releasing to the full population.

Mobile applications are good examples of where it’s essential to

automate deployment into test environments, in this case onto devices so

that a new version can be explored before invoking the guardians of the

App Store. Indeed any device-bound software needs ways to easily get new

versions on to test devices.

When deploying software like this, remember to ensure that version

information is visible. An about screen should contain a build id that

ties back to version control, logs should make it easy to see which version

of the software is running, and there should be some API endpoint that will

give version information.

Styles of Integration

So far, I’ve described one way to approach integration, but if it’s

not universal, then there must be other ways. As with anything, any

classification I give has fuzzy boundaries, but I find it useful to think

of three styles of handling integration: Pre-Release Integration, Feature

Branches, and Continuous Integration.

The oldest is the one I saw in that warehouse in the 80’s:

Pre-Release Integration. This sees integration as a phase of

a software project, a notion that is a natural part of a Waterfall Process. In such a project work is divided into

units, which may be done by individuals or small teams. Each unit is

a portion of the software, with minimal interaction with other

units. These units are built and tested on their own (the original use of

the term “unit test”). Then once the units are ready, we integrate them

into the final product. This integration occurs once, and is followed by

integration testing, and on to a release. Thus if we think of the work, we

see two phases, one where everyone works in parallel on features,

followed by a single stream of effort at integration.

The frequency of integration in

this style is tied to the frequency of release, usually major versions of

the software, usually measured in months or years. These teams will use a

different process for urgent bug fixes, so they can be released

separately to the regular integration schedule.

One of the most popular approaches to integration these days is to use

Feature Branches. In this style

features are assigned to individuals or small teams, much as units in the

older approach. However, instead of waiting until all the units are done

before integrating, developers integrate their feature into the mainline

as soon as it’s done. Some teams will release to production after each

feature integration, others prefer to batch up a few features for

release.

Teams using feature branches will usually expect everyone to pull from

mainline regularly, but this is semi-integration. If Rebecca and I

are working on separate features, we might pull from mainline every day,

but we don’t see each other’s changes until one of us completes our

feature and integrates, pushing it to the mainline. Then the other will

see that code on their next pull, integrating it into their working copy.

Thus after each feature is pushed to mainline, every other developer will

then do integration work to combine this latest mainline push with

their own feature branch.

This is only semi-integration because each developer combines the

changes on mainline to their own local branch. Full integration can’t

happen until a developer pushes their changes, causing another round of

semi-integrations. Even if Rebecca and I both pull the same changes from

mainline, we’ve only integrated with those changes, not with each other’s

branches.

With Continuous Integration, every day we are all pushing our changes

to the mainline and pulling everyone else’s changes into our own work.

This leads to many more bouts of integration work, but each bout is much

smaller. It’s much easier to combine a few hours’ work on a code base than

to combine several days.

Benefits of Continuous Integration

When discussing the relative merits of the three styles of integration,

most of the discussion is really about the frequency of integration. Both Pre-Release

Integration and Feature Branching can operate at different frequencies and

it’s possible to change integration frequency without changing the style

of integration. If we’re using Pre-Release Integration, there’s a big

difference between monthly releases and annual releases. Feature Branching

usually works at a higher frequency, because integration occurs when each

feature is individually pushed to mainline, as opposed to waiting to batch

a bunch of units together. If a team is doing Feature Branching and all

its features are less than a day’s work to build, then they are

effectively the same as Continuous Integration. But Continuous Integration

is different in that it’s defined as a high-frequency style.

Continuous Integration makes a point of setting integration frequency as a

target in itself, and not binding it to feature completion or release

frequency.

It thus follows that most teams can see a useful improvement in the

factors I’ll discuss below by increasing their frequency without changing

their style. There are significant benefits to reducing the size of

features from two months to two weeks. Continuous Integration has the

advantage of setting high-frequency integration as the baseline, setting

habits and practices that make it sustainable.

Reduced risk of delivery delays

It’s very hard to estimate how long it takes to do a complex

integration. Sometimes it can be a struggle to merge in git, but then

all works well. Other times it can be a quick merge, but a subtle

integration bug takes days to find and fix. The longer the time between

integrations, the more code to integrate, the longer it takes, but

what’s worse is the increase in unpredictability.

This all makes pre-release integration a special form of nightmare.

Because the integration is one of the last steps before release, time is

already tight and the pressure is on. Having a hard-to-predict phase

late in the day means we have a significant risk that’s very difficult

to mitigate. That was why my 80’s memory is so strong, and it’s hardly the

only time I’ve seen projects stuck in an integration hell, where every

time they fix an integration bug, two more pop up.

Any step to increase integration frequency lowers this risk. The

less integration there is to do, the less unknown time there is before a

new release is ready. Feature Branching helps by pushing this

integration work to individual feature streams, so that, if left alone,

a stream can push to mainline as soon as the feature is ready.

But that left alone point is important. If anyone else pushes

to mainline, then we introduce some integration work before the feature

is done. Because the branches are isolated, a developer working on one

branch doesn’t have much visibility about what other features may push,

and how much work would be involved to integrate them. While there is a

danger that high priority features can face integration delays, we can

manage this by preventing pushes of lower-priority features.

Continuous Integration effectively eliminates delivery risk. The

integrations are so small that they usually proceed without comment. An

awkward integration would be one that takes more than a few minutes to

resolve. The very worst case would be a conflict that causes someone to

restart their work from scratch, but that would still be less than a

day’s work to lose, and is thus not going to be something that’s likely

to trouble a board of stakeholders. Furthermore we’re doing integration

regularly as we develop the software, so we can face problems while we

have more time to deal with them and can practice how to resolve

them.

Even if a team isn’t releasing to production regularly, Continuous

Integration is important because it allows everyone to see exactly what

the state of the product is. There are no hidden integration efforts that

need to be done before release; any effort in integration is already

baked in.

Less time wasted in integration

I’ve not seen any serious studies that measure how time spent on

integration matches the size of integrations, but my anecdotal

evidence strongly suggests that the relationship isn’t linear. If

there’s twice as much code to integrate, it’s more likely to take four

times as long to carry out the integration. It’s rather like how we need

three lines to fully connect three nodes, but six lines to connect four

of them. Integration is all about connections, hence the non-linear

increase, one that’s reflected in the experience of my colleagues.
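
The node analogy follows the usual count of pairwise connections, n(n-1)/2. The short sketch below only illustrates that analogy; it is not a measured model of integration effort, and the function name is invented.

```python
def connections(nodes: int) -> int:
    """Number of lines needed to connect every pair of nodes."""
    return nodes * (nodes - 1) // 2


for n in (3, 4, 8):
    print(n, connections(n))
# 3 3
# 4 6
# 8 28
```

Doubling from four nodes to eight takes the count from 6 to 28, more than four times as many, which is the kind of growth the anecdotal evidence points to.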

In organizations that are using feature branches, much of this lost

time is felt by the individual. Several hours spent trying to rebase on

a big change to mainline is frustrating. A few days spent waiting for a

code review on a finished pull request, during which another big mainline

change lands, is even more frustrating. Having to put

work on a new feature aside to debug a problem found in an integration

test of a feature finished two weeks ago saps productivity.

When we’re doing Continuous Integration, integration is generally a

non-event. I pull down the mainline, run the build, and push. If

there is a conflict, the small amount of code I’ve written is fresh in

my mind, so it’s usually easy to see. The workflow is regular, so we’re

practiced at it, and we’re incentivized to automate it as much as

possible.

Like many of these non-linear effects, integration can easily become

a trap where people learn the wrong lesson. A difficult integration may

be so traumatic that a team decides it should do integrations less

often, which only exacerbates the problem in future.

What’s happening here is that we are seeing much closer collaboration

between the members of the team. Should two developers make decisions

that conflict, we find out when we integrate. So the less time

between integrations, the less time before we detect the conflict, and

we can deal with the conflict before it grows too big. With high-frequency

integration, our source control system becomes a communication channel,

one that can communicate things that might otherwise be unsaid.

Much less Bugs

Bugs: these are the nasty things that destroy confidence and mess up

schedules and reputations. Bugs in deployed software make users angry

with us. Bugs cropping up during regular development get in our way,

making it harder to get the rest of the software working correctly.

Continuous Integration doesn’t get rid of bugs, but it does make them

dramatically easier to find and remove. This is less because of the

high-frequency integration and more due to the essential introduction of

self-testing code. Continuous Integration doesn’t work without

self-testing code because without decent tests, we can’t keep a healthy

mainline. Continuous Integration thus institutes a regular regimen of

testing. If the tests are inadequate, the team will quickly notice, and

can take corrective action. If a bug appears due to a semantic conflict,

it’s easy to detect because there’s only a small amount of code to be

integrated. Frequent integrations also work well with Diff Debugging, so even a bug noticed weeks later can be

narrowed down to a small change.
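
One common way to narrow a bug down to a single change is to bisect the commit history, which is what `git bisect` automates. The sketch below is an illustration under assumptions: the commit names are invented, the first commit is known good, the last is known bad, and once the bug appears it stays in every later commit.

```python
def first_bad(commits, is_good):
    """Binary search for the first commit where is_good returns False."""
    lo, hi = 0, len(commits) - 1  # invariant: commits[lo] is good, commits[hi] is bad
    while hi - lo > 1:
        mid = (lo + hi) // 2
        if is_good(commits[mid]):
            lo = mid
        else:
            hi = mid
    return commits[hi]


commits = [f"c{i}" for i in range(1, 17)]  # sixteen small integrations
checked = []


def is_good(commit):
    # Hypothetical check: in practice this would build the commit and run a test.
    checked.append(commit)
    return int(commit[1:]) < 11            # pretend the bug arrived in c11


print(first_bad(commits, is_good))  # c11
print(len(checked))                 # 4
```

Four checks are enough to search sixteen commits, and because each commit is a small integration, the change it points to is small enough to read in full.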

Bugs are also cumulative. The

more bugs we have, the harder it is to remove each one. This is partly

because we get bug interactions, where failures show as the result of

multiple faults, making each fault harder to find. It’s also

psychological: people have less energy to find and get rid of bugs when

there are many of them. Thus self-testing code reinforced by Continuous

Integration has another exponential effect in reducing the problems

caused by defects.

This runs into another phenomenon that many

people find counter-intuitive. Seeing how often introducing a change

means introducing bugs, people conclude that to have high reliability

software they need to slow down the release rate. This was firmly

contradicted by the DORA research

program led by Nicole Forsgren. They found that elite teams

deployed to production more rapidly, more frequently, and had a

dramatically lower incidence of failure when they made these changes.

The research also finds that teams have higher levels of performance

when they have three or fewer active branches in the application’s code

repository, merge branches to mainline at least once a day, and don’t have

code freezes or integration phases.

Enables Refactoring for sustained productivity

Most teams observe that over time, codebases deteriorate. Early

decisions were good at the time, but are no longer optimal after six

months’ work. But changing the code to incorporate what the team has

learned means introducing changes deep in the existing code,

which results in difficult merges which are both time-consuming and full

of risk. Everyone recalls that time someone made what would be a good

change for the future, but caused days of effort breaking other people’s

work. Given that experience, nobody wants to rework the structure of

existing code, even though it’s now awkward for everyone to build on,

thus slowing down delivery of new features.

Refactoring is an essential technique to attenuate and indeed reverse

this process of decay. A team that refactors regularly has a

disciplined technique to improve the structure of a code base by using

small, behavior-preserving transformations of the code. These

characteristics of the transformations

greatly reduce their chances of introducing bugs, and

they can be done quickly, especially when supported by a foundation of

self-testing code. Applying refactoring at every opportunity, a team can

improve the structure of an existing codebase, making it easier and

faster to add new capabilities.

But this happy story can be torpedoed by integration woes. A two week

refactoring session may greatly improve the code, but result in long

merges because everyone else has been spending the last two weeks

working with the old structure. This raises the costs of refactoring to

prohibitive levels. Frequent integration solves this dilemma by ensuring

that both those doing the refactoring and everyone else are regularly

synchronizing their work. When using Continuous Integration, if someone

makes intrusive changes to a core library I’m using, I only have to

adjust a few hours of programming to these changes. If they do something

that clashes with the direction of my changes, I know right away, so

have the opportunity to talk to them so we can figure out a better way

forward.

So far in this article I’ve raised several counter-intuitive notions about
the merits of high-frequency integration: that the more often we
integrate, the less time we spend integrating, and that frequent
integration leads to fewer bugs. Here is perhaps the most important
counter-intuitive notion in software development: that teams that spend a
lot of effort keeping their code base healthy deliver features faster and cheaper. Time
invested in writing tests and refactoring delivers impressive returns in
delivery speed, and Continuous Integration is a core part of making that
work in a team setting.

Release to Production is a business decision

Imagine we are demonstrating some newly built feature to a
stakeholder, and she reacts by saying: “this is really cool, and would
make a big business impact. How long before we can make this live?” If
that feature is being shown on an unintegrated branch, then the answer
may be weeks or months, particularly if there is poor automation on the
path to production. Continuous Integration allows us to maintain a
Release-Ready Mainline, which means the
decision to release the latest version of the product into production is
purely a business decision. If the stakeholders want the latest to go
live, it’s a matter of minutes running an automated pipeline to make it
so. This allows the customers of the software greater control of when
features are released, and encourages them to collaborate more closely
with the development team.

Continuous Integration and a Release-Ready Mainline remove one of the biggest
barriers to frequent deployment. Frequent deployment is valuable because
it allows our users to get new features more rapidly, to give more
rapid feedback on those features, and generally become more
collaborative in the development cycle. This helps break down the
barriers between customers and development, barriers which I believe
are the biggest barriers to successful software development.

When we should not use Continuous Integration

All those benefits sound rather juicy. But folks as experienced (or
cynical) as I am are always suspicious of a bare list of benefits. Few
things come without a cost, and decisions about architecture and process
are usually a matter of trade-offs.

But I confess that Continuous Integration is one of those rare cases
where there’s little downside for a committed and skillful team to utilize it. The cost
imposed by sporadic integration is so great that almost any team can
benefit by increasing their integration frequency. There is some limit to
when the benefits stop piling up, but that limit sits at hours rather
than days, which is exactly the territory of Continuous Integration. The
interplay between self-testing code, Continuous Integration, and
Refactoring is particularly strong. We’ve been using this approach for two
decades at Thoughtworks, and our only question is how to do it more
effectively; the core approach is proven.

But that doesn’t mean that Continuous Integration is for everyone. You
might notice that I said that “there’s little downside for a
committed and skillful team to utilize it”. Those two adjectives
indicate the contexts where Continuous Integration isn’t a good fit.

By “committed”, I mean a team that’s working full-time on a product. A
good counter-example to this is a classical open-source project, where
there are one or two maintainers and many contributors. In such a situation
even the maintainers are only doing a few hours a week on the project,
they don’t know the contributors very well, and don’t have good visibility
for when contributors contribute or the standards they should follow when
they do. This is the environment that led to a feature branch workflow and
pull-requests. In such a context Continuous Integration isn’t plausible,
although efforts to increase the integration frequency can still be
valuable.

Continuous Integration is more suited to a team working full-time on a
product, as is usually the case with commercial software. But there is
much middle ground between the classical open-source and the full-time
model. We need to use our judgment about what integration policy to use
that fits the commitment of the team.

The second adjective looks at the skill of the team in following the
necessary practices. If a team attempts Continuous
Integration without a strong test suite, they will run into all sorts of
trouble because they don’t have a mechanism for screening out bugs. If they don’t
automate, integration will take too long, interfering with the flow of
development. If folks aren’t disciplined about ensuring their pushes to
mainline are done with green builds, then the mainline will end up
broken all the time, getting in the way of everyone’s work.
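
The discipline of only pushing green builds can be partly automated. The following is a minimal sketch under stated assumptions: `build_is_green` and `push_if_green` are hypothetical helper names, and the build and push commands are placeholders that each team would replace with whatever its own project uses.

```python
import subprocess
import sys
from typing import Sequence


def build_is_green(build_command: Sequence[str]) -> bool:
    """Run the build-and-test command; a zero exit code counts as green."""
    return subprocess.run(list(build_command)).returncode == 0


def push_if_green(build_command: Sequence[str],
                  push_command: Sequence[str]) -> bool:
    """Run the push command only when the local build is green.

    Returns True if the build passed and the push command succeeded.
    """
    if not build_is_green(build_command):
        print("Build is red: fix it before pushing to mainline.",
              file=sys.stderr)
        return False
    return subprocess.run(list(push_command)).returncode == 0
```

A team might call this as `push_if_green(["make", "test"], ["git", "push", "origin", "main"])`, assuming a `make test` target exists and the mainline branch is named `main`. A script like this is an aid to the habit, not a substitute for it.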

Anyone who is considering introducing Continuous Integration has to
bear these skills in mind. Instituting Continuous Integration without
self-testing code won’t work, and it will also give an inaccurate
impression of what Continuous Integration is like when it’s done well.

That said, I don’t think the skill demands are particularly hard. We don’t
need rock-star developers to get this process working in a team. (Indeed
rock-star developers are often a barrier, as people who think of themselves
that way usually aren’t very disciplined.) The skills for these technical practices
aren’t that hard to learn; usually the problem is finding a good teacher
and forming the habits that crystallize the discipline. Once the team gets
the hang of the flow, it usually feels comfortable, smooth, and fast.

Common Questions

Where did Continuous Integration come from?

Continuous Integration was developed as a practice by Kent Beck as
part of Extreme Programming in the 1990s. At that time pre-release
integration was the norm, with release frequencies often measured in
years. There had been a general push to iterative development, with
faster release cycles. But few teams were thinking in weeks between
releases. Kent defined the practice, developed it with projects he
worked on, and established how it interacted with the
other key practices upon which it relies.

Microsoft had been known for doing daily builds (usually
overnight), but without the testing regimen or the focus on fixing
defects that are such crucial elements of Continuous
Integration.

Some people credit Grady Booch for coining the term, but he only
used the phrase as an offhand description in a single sentence in his
object-oriented design book. He did not treat it as a defined practice;
indeed it didn’t appear in the index.

What is the difference between Continuous Integration and Trunk-Based Development?

As CI Services became popular, many people used
them to run regular builds on feature branches. This, as explained
above, isn’t Continuous Integration at all, but it led to many people
saying (and thinking) they were doing Continuous Integration when they
were doing something significantly different, which causes a lot of confusion.

Some folks decided to tackle this Semantic Diffusion by coining a new term: Trunk-Based
Development. In general I see this as a synonym for Continuous Integration
and acknowledge that it doesn’t tend to suffer from confusion with
“running Jenkins on our feature branches”. I’ve read some people
trying to formulate some distinction between the two, but I find these
distinctions are neither consistent nor compelling.

I don’t use the term Trunk-Based Development, partly because I don’t
think coining a new name is a good way to counter semantic diffusion,
but mostly because renaming this technique rudely erases the work of
those, especially Kent Beck, who championed and developed Continuous
Integration in the beginning.

Despite me avoiding the term, there is a lot of good information
about Continuous Integration that’s written under the flag of
Trunk-Based Development. In particular, Paul Hammant has written a lot
of excellent material on his website.

Can we run a CI Service on our feature branches?

The simple answer is “yes, but you aren’t doing Continuous
Integration”. The key principle here is that “Everyone Commits To the
Mainline Every Day”. Doing an automated build on feature branches is
useful, but it is only semi-integration.

However, it is a common confusion that using a daemon build in this
way is what Continuous Integration is about. The confusion comes from
calling these tools Continuous Integration Services; a better term
would be something like “Continuous Build Services”. While using a CI
Service is a useful aid to doing Continuous Integration, we shouldn’t
confuse a tool for the practice.

What is the difference between Continuous Integration and Continuous Delivery?

The early descriptions of Continuous Integration focused on the
cycle of developer integration with the mainline in the team’s
development environment. Such descriptions didn’t talk much about the
journey from an integrated mainline to a production release. That
doesn’t mean they weren’t in people’s minds. Practices like “Automate
Deployment” and “Test in a Clone of the Production Environment” clearly