OpenAI finally unveiled its rumored “Strawberry” AI language model on Thursday, claiming significant improvements in what it calls “reasoning” and problem-solving capabilities over previous large language models (LLMs). Formally named “OpenAI o1,” the model family will initially launch in two forms, o1-preview and o1-mini, available today for ChatGPT Plus and certain API users.

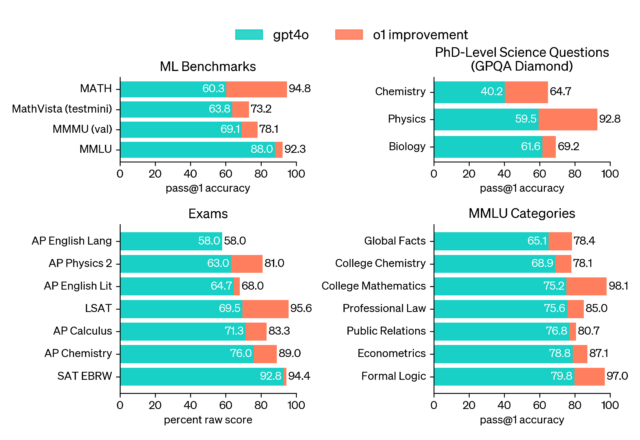

OpenAI claims that o1-preview outperforms its predecessor, GPT-4o, on multiple benchmarks, including competitive programming, mathematics, and “scientific reasoning.” However, people who have used the model say it does not yet outclass GPT-4o in every metric. Other users have criticized the delay in receiving a response from the model, owing to the multi-step processing that occurs behind the scenes before it answers a query.

In a rare display of public hype-busting, OpenAI product manager Joanne Jang tweeted, “There’s a lot of o1 hype on my feed, so I’m worried that it might be setting the wrong expectations. what o1 is: the first reasoning model that shines in really hard tasks, and it’ll only get better. (I’m personally psyched about the model’s potential & trajectory!) what o1 isn’t (yet!): a miracle model that does everything better than previous models. you might be disappointed if this is your expectation for today’s launch—but we’re working to get there!”

OpenAI reports that o1-preview ranked in the 89th percentile on competitive programming questions from Codeforces. In mathematics, it scored 83 percent on a qualifying exam for the International Mathematics Olympiad, compared to GPT-4o’s 13 percent. OpenAI also states, in a claim that may later be challenged as people scrutinize the benchmarks and run their own evaluations over time, that o1 performs comparably to PhD students on specific tasks in physics, chemistry, and biology. The smaller o1-mini model is designed specifically for coding tasks and is priced at 80 percent less than o1-preview.

OpenAI attributes o1’s advancements to a new reinforcement learning (RL) training approach that teaches the model to spend more time “thinking through” problems before responding, similar to how “let’s think step-by-step” chain-of-thought prompting can improve outputs in other LLMs. The new process allows o1 to try different strategies and “recognize” its own mistakes.
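OpenAI has not published the details of o1’s training, so the sketch below illustrates only the older prompting technique the comparison refers to: zero-shot chain-of-thought, where a trigger phrase nudges a model to write out intermediate steps before giving a final answer. It is a minimal Python sketch under stated assumptions. The helpers `build_cot_prompt` and `extract_final_answer` and the “Answer:” line convention are hypothetical, and no model is actually called; the completion is a hand-written stand-in.

```python
import re
from typing import Optional

# Zero-shot chain-of-thought trigger phrase (the "let's think step by step" trick).
COT_TRIGGER = "Let's think step by step."


def build_cot_prompt(question: str) -> str:
    """Wrap a question so the model is nudged to write out intermediate steps."""
    # Hypothetical convention: ask for a final "Answer:" line so the result
    # is easy to parse out of a longer reasoning trace.
    return (
        f"Q: {question}\n"
        "End with a line of the form 'Answer: <result>'.\n"
        f"A: {COT_TRIGGER}"
    )


def extract_final_answer(completion: str) -> Optional[str]:
    """Return the text after the last 'Answer:' marker, or None if there is none."""
    matches = re.findall(r"Answer:\s*(.+)", completion)
    return matches[-1].strip() if matches else None


prompt = build_cot_prompt("How many times does the letter r appear in 'strawberry'?")

# Hand-written stand-in for a model's step-by-step reply; no model is called here.
completion = (
    "Spell it out: s-t-r-a-w-b-e-r-r-y.\n"
    "The r's are at positions 3, 8, and 9.\n"
    "Answer: 3"
)
print(extract_final_answer(completion))  # 3
```

The intermediate lines are the “thinking” that the trigger phrase encourages; only the last line is treated as the answer.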

AI benchmarks are notoriously unreliable and easy to game; however, independent verification and experimentation from users will show the full extent of o1’s advancements over time. It’s worth noting that MIT Research showed earlier this year that some of the benchmark claims OpenAI touted with GPT-4 last year were erroneous or exaggerated.

A mixed bag of capabilities

OpenAI demos “o1” correctly counting the number of Rs in the word “strawberry.”

Amid many demo videos of o1 completing programming tasks and solving logic puzzles that OpenAI shared on its website and social media, one demo stood out as perhaps the least consequential and least impressive, but it may become the most talked about due to a recurring meme where people ask LLMs to count the number of Rs in the word “strawberry.”

Due to tokenization, where the LLM processes words in data chunks called tokens, most LLMs are typically blind to character-by-character differences in words. Apparently, o1 has the self-reflective capabilities to figure out how to count the letters and provide an accurate answer without user assistance.
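To make the tokenization problem concrete, here is a toy Python sketch. The vocabulary, its split of “strawberry” into “str,” “aw,” and “berry,” and the token IDs are all invented for illustration; real tokenizers learn their own subword splits. The point is only that a model receiving token IDs never directly sees individual letters, so counting them requires mapping each token back to its text and spelling it out.

```python
from typing import Dict, List

# Hypothetical toy vocabulary: real tokenizers (e.g., BPE-based ones) learn
# their own subword splits and IDs, which will differ from these.
TOY_VOCAB: Dict[str, int] = {"str": 101, "aw": 102, "berry": 103}


def toy_tokenize(word: str, vocab: Dict[str, int]) -> List[str]:
    """Split a word into pieces by greedy longest match against the vocabulary."""
    pieces: List[str] = []
    i = 0
    while i < len(word):
        # Try the longest remaining substring first, then shrink it.
        for j in range(len(word), i, -1):
            if word[i:j] in vocab:
                pieces.append(word[i:j])
                i = j
                break
        else:
            raise ValueError(f"no vocabulary entry covers {word[i:]!r}")
    return pieces


def count_letter_via_tokens(token_ids: List[int], vocab: Dict[str, int], letter: str) -> int:
    """Count a letter by mapping each token ID back to its text, then spelling it out."""
    id_to_piece = {token_id: piece for piece, token_id in vocab.items()}
    return sum(id_to_piece[t].count(letter) for t in token_ids)


pieces = toy_tokenize("strawberry", TOY_VOCAB)
token_ids = [TOY_VOCAB[p] for p in pieces]  # what the model actually receives
print(pieces)     # ['str', 'aw', 'berry']
print(token_ids)  # [101, 102, 103]
print(count_letter_via_tokens(token_ids, TOY_VOCAB, "r"))  # 3
```

In this toy setup, the three Rs are spread across two tokens and are only recoverable by going back from IDs to text. A model has to learn that mapping implicitly, which is one plausible reason letter counting trips up LLMs.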

Beyond OpenAI’s demos, we’ve seen optimistic but cautious hands-on reports about o1-preview online. Wharton Professor Ethan Mollick wrote on X, “Been using GPT-4o1 for the last month. It’s fascinating—it doesn’t do everything better but it solves some very hard problems for LLMs. It also points to a lot of future gains.”

Mollick shared a hands-on post on his “One Useful Thing” blog that details his experiments with the new model. “To be clear, o1-preview doesn’t do everything better. It is not a better writer than GPT-4o, for example. But for tasks that require planning, the changes are quite large.”

Mollick gives the example of asking o1-preview to build a teaching simulator “using multiple agents and generative AI, inspired by the paper below and considering the views of teachers and students,” then asking it to build the full code, and it produced a result that Mollick found impressive.

Mollick also gave o1-preview eight crossword puzzle clues, translated into text, and the model took 108 seconds to solve them over many steps, getting all the answers correct but confabulating a particular clue Mollick did not give it. We recommend reading Mollick’s entire post for a good early hands-on impression. Given his experience with the new model, it appears that o1 works much like GPT-4o but iteratively in a loop, which is something that the so-called “agentic” AutoGPT and BabyAGI projects experimented with in early 2023.
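The “loop” idea can be sketched in a few lines of Python. Each model output is appended to a running transcript, and the transcript is fed back in until the model signals that it is done. This is a minimal illustration of the AutoGPT/BabyAGI-style pattern under stated assumptions, not a description of how o1 works internally, which OpenAI has not disclosed. `run_agent_loop`, the `FINAL:` stop marker, and `scripted_model` are all hypothetical. `scripted_model` returns canned, invented replies instead of calling a real LLM.

```python
from typing import Callable, Iterator, List, Optional, Tuple

# A "model" here is any function from the transcript so far to the next step.
Model = Callable[[str], str]


def run_agent_loop(task: str, model: Model, max_steps: int = 10) -> Tuple[Optional[str], List[str]]:
    """Feed the growing transcript back to the model until it emits 'FINAL:'."""
    transcript: List[str] = [f"Task: {task}"]
    for _ in range(max_steps):
        step = model("\n".join(transcript))
        transcript.append(step)
        if step.startswith("FINAL:"):
            return step[len("FINAL:"):].strip(), transcript
    # Step budget exhausted without a final answer.
    return None, transcript


def scripted_model(replies: List[str]) -> Model:
    """Hypothetical stand-in for an LLM call: returns canned replies in order."""
    it: Iterator[str] = iter(replies)
    return lambda _transcript: next(it)


# Invented example replies; they do not come from any real model run.
answer, transcript = run_agent_loop(
    "Fill in a small crossword",
    scripted_model([
        "Plan: propose a candidate answer for each clue.",
        "Check: 3-Down conflicts with 5-Across; revise 3-Down.",
        "FINAL: all clues filled",
    ]),
)
print(answer)           # all clues filled
print(len(transcript))  # 4
```

The `max_steps` cap stops a model that never emits the stop marker from running forever. Each extra pass also adds another model call and more latency, a trade-off that resembles the slower responses users have noticed with o1.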

Is this what could “threaten humanity?”

Speaking of agentic models that run in loops, Strawberry has been the subject of hype since last November, when it was initially known as Q* (Q-star). At the time, The Information and Reuters claimed that, just before Sam Altman’s brief ouster as CEO, OpenAI employees had internally warned OpenAI’s board of directors about a new OpenAI model called Q* that could “threaten humanity.”

In August, the hype continued when The Information reported that OpenAI showed Strawberry to US national security officials.

We’ve been skeptical about the hype around Q* and Strawberry since the rumors first emerged, as this author noted last November, and as Timothy B. Lee covered thoroughly in an excellent post about Q* from last December.

So even though o1 is out, AI industry watchers should note how this model’s impending launch was played up in the press as a dangerous advancement while not being publicly downplayed by OpenAI. For an AI model that takes 108 seconds to solve eight clues in a crossword puzzle and hallucinates one answer, we can say that its potential danger was likely hype (for now).

Controversy over “reasoning” terminology

It’s no secret that some people in tech take issue with anthropomorphizing AI models and using terms like “thinking” or “reasoning” to describe the synthesizing and processing operations that these neural network systems perform.

Just after the OpenAI o1 announcement, Hugging Face CEO Clement Delangue wrote, “Once again, an AI system is not ‘thinking,’ it’s ‘processing,’ ‘running predictions,’… just like Google or computers do. Giving the false impression that technology systems are human is just cheap snake oil and marketing to fool you into thinking it’s more clever than it is.”

“Reasoning” is also a somewhat nebulous term since, even in humans, it’s difficult to define exactly what the term means. A few hours before the announcement, independent AI researcher Simon Willison tweeted in response to a Bloomberg story about Strawberry, “I still have trouble defining ‘reasoning’ in terms of LLM capabilities. I’d be interested in finding a prompt which fails on current models but succeeds on strawberry that helps demonstrate the meaning of that term.”

Reasoning or not, o1-preview currently lacks some features present in earlier models, such as web browsing, image generation, and file uploading. OpenAI plans to add these capabilities in future updates, along with continued development of both the o1 and GPT model series.

While OpenAI says the o1-preview and o1-mini models are rolling out today, neither model is available in our ChatGPT Plus interface yet, so we have not been able to evaluate them. We’ll report our impressions on how this model differs from other LLMs we have previously covered.